AWS Notes: Load Balancers and Auto Scaling Groups

These are my load balancer notes. They cover AWS load balancers: what they are, the different types, and how to use them.

Load balancers distribute incoming traffic across multiple EC2 instances (or other targets). This helps with high availability, fault tolerance, and scaling. Instead of one instance handling all traffic, multiple instances share the load.

AWS Load Balancer Types

AWS has 4 types of load balancers:

| Type | Layer | Use Case | Protocol |
|---|---|---|---|
| Application Load Balancer (ALB) | Layer 7 (HTTP/HTTPS) | Web applications, microservices | HTTP, HTTPS |
| Network Load Balancer (NLB) | Layer 4 (TCP/UDP) | High performance, low latency | TCP, UDP, TLS |
| Classic Load Balancer (CLB) | Layer 4/7 (legacy) | Legacy applications | HTTP, HTTPS, TCP, SSL |
| Gateway Load Balancer (GWLB) | Layer 3 (IP) | Network security appliances | IP |

Quick summary:

- ALB: Best for web apps, routing based on content (path, host, headers)

- NLB: Best for extreme performance, millions of requests per second

- CLB: Old version, don’t use for new projects

- GWLB: For deploying security appliances (firewalls, intrusion detection)

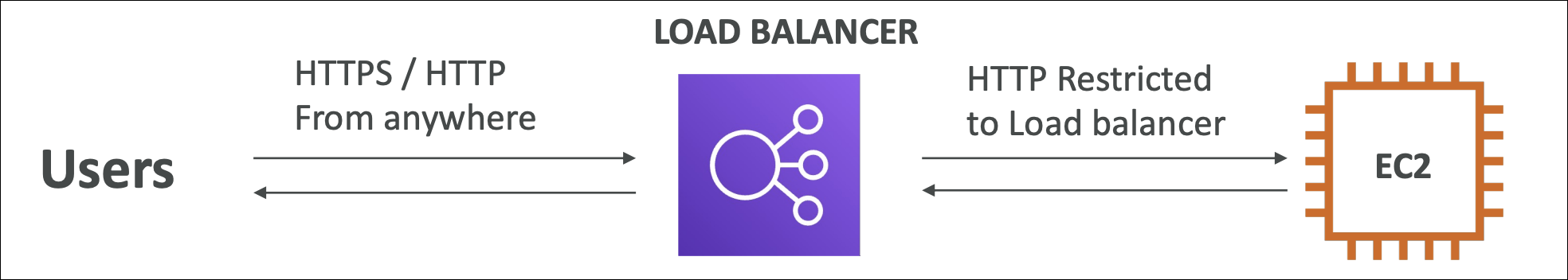

Security Groups

Security groups are important for load balancers. You need to configure them correctly for both the load balancer and the EC2 instances behind it.

Load Balancer Security Group

The load balancer’s security group should allow incoming traffic from the internet (or your VPC) on the ports your application uses.

Example for web application (ALB):

| Type | Protocol | Port | Source |
|---|---|---|---|
| Inbound | HTTP | 80 | 0.0.0.0/0 (or specific IPs) |
| Inbound | HTTPS | 443 | 0.0.0.0/0 (or specific IPs) |
| Outbound | All | All | 0.0.0.0/0 |

For production, consider restricting source IPs instead of `0.0.0.0/0` for better security.

EC2 Instance Security Group

The EC2 instances behind the load balancer should NOT allow direct access from the internet. They should only accept traffic from the load balancer.

Example for EC2 instances:

| Type | Protocol | Port | Source |
|---|---|---|---|
| Inbound | HTTP | 80 | Load Balancer Security Group ID |
| Inbound | HTTPS | 443 | Load Balancer Security Group ID |
| Inbound | SSH | 22 | Your IP address (for management only) |
| Outbound | All | All | 0.0.0.0/0 |

Key points:

- EC2 instances should allow traffic only from the load balancer’s security group

- Don’t allow `0.0.0.0/0` on application ports (80, 443) for EC2 instances
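The key points above can be turned into a quick check. This is a minimal Python sketch, not an AWS API call: `InboundRule`, `open_app_ports`, and the `sg-alb` group ID are hypothetical names used only for illustration, and each rule is modeled as just a port plus a source string.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class InboundRule:
    port: int
    source: str  # a CIDR such as "0.0.0.0/0", or a security group ID such as "sg-alb"


APP_PORTS = {80, 443}
ANYWHERE = {"0.0.0.0/0", "::/0"}


def open_app_ports(rules):
    """Return the application ports that are reachable from anywhere."""
    return sorted({r.port for r in rules if r.port in APP_PORTS and r.source in ANYWHERE})


# Rules matching the EC2 instance table above (hypothetical IDs and addresses)
instance_rules = [
    InboundRule(80, "sg-alb"),
    InboundRule(443, "sg-alb"),
    InboundRule(22, "203.0.113.7/32"),
]

print(open_app_ports(instance_rules))
print(open_app_ports(instance_rules + [InboundRule(80, "0.0.0.0/0")]))
```

An empty list means the instances only accept application traffic from the load balancer's security group; any port in the result is still exposed to the internet.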

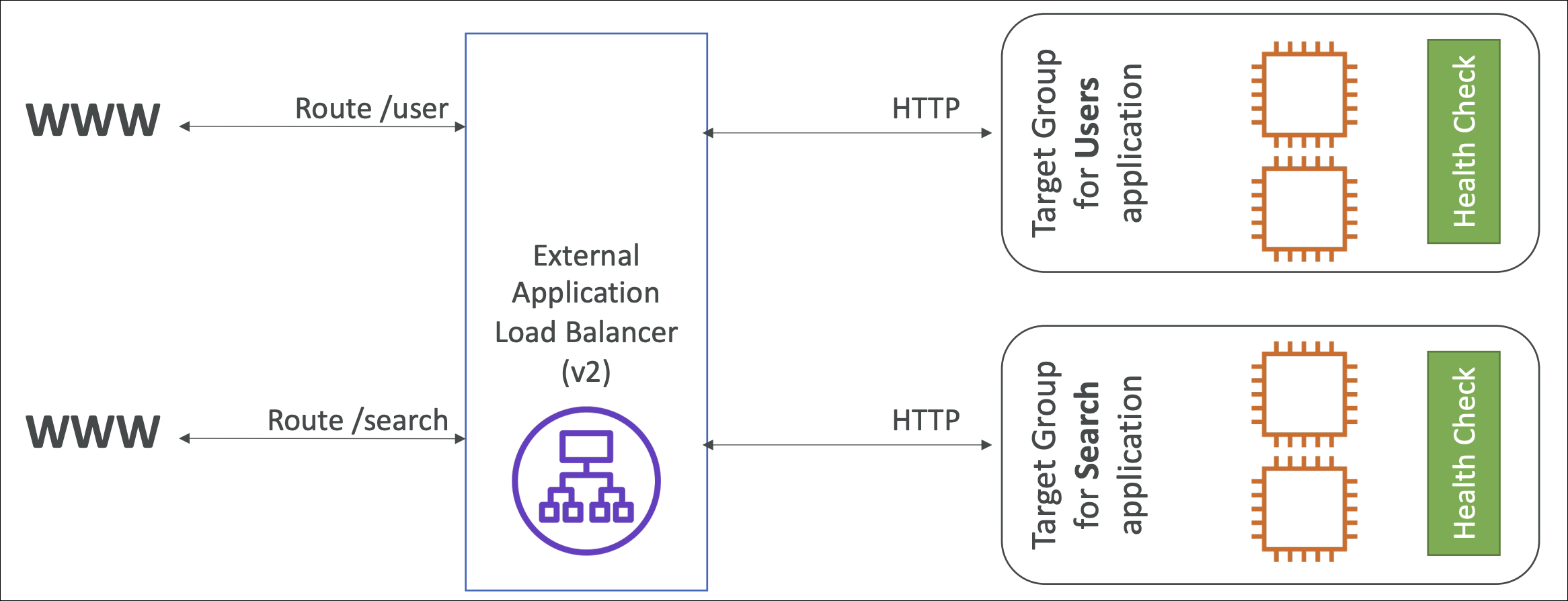

Application Load Balancer (ALB)

ALB is a Layer 7 load balancer. It works with HTTP and HTTPS traffic and can make routing decisions based on the content of the request (path, host, headers, query strings).

What ALB Can Do

Content-based routing:

- Route based on URL path (`/api/*` → API servers, `/static/*` → static content)

- Route based on host header (`api.example.com` → API, `www.example.com` → web)

- Route based on HTTP headers (custom headers, user-agent, etc.)

- Route based on query strings and source IP

SSL/TLS termination:

- ALB handles SSL certificates (install certificate on ALB, not on EC2)

- EC2 instances receive plain HTTP (easier to manage)

- Can use AWS Certificate Manager (ACM) for free SSL certificates

Health checks:

- Automatically checks if targets (EC2 instances) are healthy

- Removes unhealthy targets from rotation

- Brings them back when they become healthy

Sticky sessions (session affinity):

- Route same user to same instance (useful for sessions stored in memory)

Integration with AWS services:

- Can route to EC2 instances, ECS tasks, Lambda functions, IP addresses

- Can integrate with AWS WAF for web application firewall

- Can integrate with CloudWatch for monitoring

ALB Components

1. Load Balancer:

- The ALB itself (has DNS name, not static IP)

- Listens on ports (80 for HTTP, 443 for HTTPS)

- Distributes traffic to target groups

2. Listeners:

- Listen on specific port and protocol (HTTP or HTTPS)

- Can have multiple listeners (e.g., port 80 and 443)

- Each listener has rules for routing

3. Listener Rules:

- Define how to route traffic

- Rules are evaluated in priority order (1, 2, 3…)

- First matching rule is used

- Default rule forwards to default target group

4. Target Groups:

- Group of targets (EC2 instances, IPs, Lambda functions)

- Health checks are configured at target group level

- Can have multiple target groups for different routes

5. Targets:

- EC2 instances, IP addresses, Lambda functions, or ECS tasks

- Must be registered in a target group
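The listener rule behaviour described under component 3 (priority order, first match wins, default rule last) can be sketched in a few lines of Python. This is only a model under stated assumptions: `Rule`, `pick_target_group`, and the target group names are hypothetical, only path conditions are modeled, and `fnmatchcase` merely approximates ALB path patterns (it also accepts `[...]` character sets, which ALB does not).

```python
from dataclasses import dataclass
from fnmatch import fnmatchcase


@dataclass(frozen=True)
class Rule:
    priority: int       # lower number = evaluated first
    path_pattern: str   # e.g. "/api/*"
    target_group: str


def pick_target_group(rules, path, default_target_group):
    """Return the target group of the first matching rule, else the default."""
    for rule in sorted(rules, key=lambda r: r.priority):
        if fnmatchcase(path, rule.path_pattern):
            return rule.target_group
    return default_target_group


rules = [
    Rule(10, "/api/*", "api-tg"),
    Rule(5, "/api/admin/*", "admin-tg"),
    Rule(20, "/static/*", "static-tg"),
]

for path in ["/api/admin/users", "/api/orders", "/static/logo.png", "/index.html"]:
    print(path, "->", pick_target_group(rules, path, "web-servers-tg"))
```

Note that `/api/admin/users` matches both `/api/admin/*` and `/api/*`; the rule with priority 5 wins because it is evaluated first.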

Health Checks

ALB automatically checks if targets are healthy.

Health check settings:

- Protocol: HTTP or HTTPS

- Path: Health check endpoint (e.g., `/health`)

- Port: Port to check (can be different from traffic port)

- Interval: How often to check (default 30 seconds)

- Timeout: How long to wait for response (default 5 seconds)

- Healthy threshold: Number of consecutive successful checks to mark healthy (default 5)

- Unhealthy threshold: Number of failed checks to mark unhealthy (default 2)

What happens:

- Healthy targets receive traffic

- Unhealthy targets are removed from rotation (no traffic sent)

- When unhealthy target becomes healthy again, it’s added back
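To make the threshold logic concrete, here is a small Python sketch of how consecutive check results flip a target between healthy and unhealthy. It is a simplified model, not ALB's actual implementation: `track_health` is a hypothetical helper, the target is assumed to start healthy (real targets start in an `initial` state), and timing (interval, timeout) is left out.

```python
def track_health(results, healthy_threshold, unhealthy_threshold, start_healthy=True):
    """Return the target state after each check result (True = check passed)."""
    healthy = start_healthy
    streak = 0  # consecutive results that disagree with the current state
    states = []
    for passed in results:
        if passed == healthy:
            streak = 0  # result agrees with the current state, nothing to flip
        else:
            streak += 1
            threshold = healthy_threshold if passed else unhealthy_threshold
            if streak >= threshold:
                healthy = passed
                streak = 0
        states.append("healthy" if healthy else "unhealthy")
    return states


# One failure is tolerated; two in a row remove the target; two passes bring it back
checks = [True, False, False, True, True]
print(track_health(checks, healthy_threshold=2, unhealthy_threshold=2))
```

With an interval of 30 seconds and an unhealthy threshold of 2, this model suggests a failing target is taken out of rotation after roughly a minute.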

SSL/TLS Termination

ALB can handle SSL certificates, so your EC2 instances don’t need to.

How it works:

- Client connects to ALB using HTTPS (port 443)

- ALB terminates SSL (decrypts the request)

- ALB forwards plain HTTP to EC2 instances (or HTTPS if you want)

- EC2 instances respond with plain HTTP

- ALB encrypts the response and sends HTTPS back to client

Benefits:

- Easier certificate management (install once on ALB, not on every instance)

- Can use AWS Certificate Manager (ACM) for free SSL certificates

- EC2 instances don’t need SSL certificates

- Can change certificates without touching EC2 instances

Using AWS Certificate Manager:

- Request certificate in ACM (must be in same region as ALB)

- Certificate must be for your domain (or use wildcard)

- Select certificate when creating HTTPS listener

- ACM automatically renews certificates

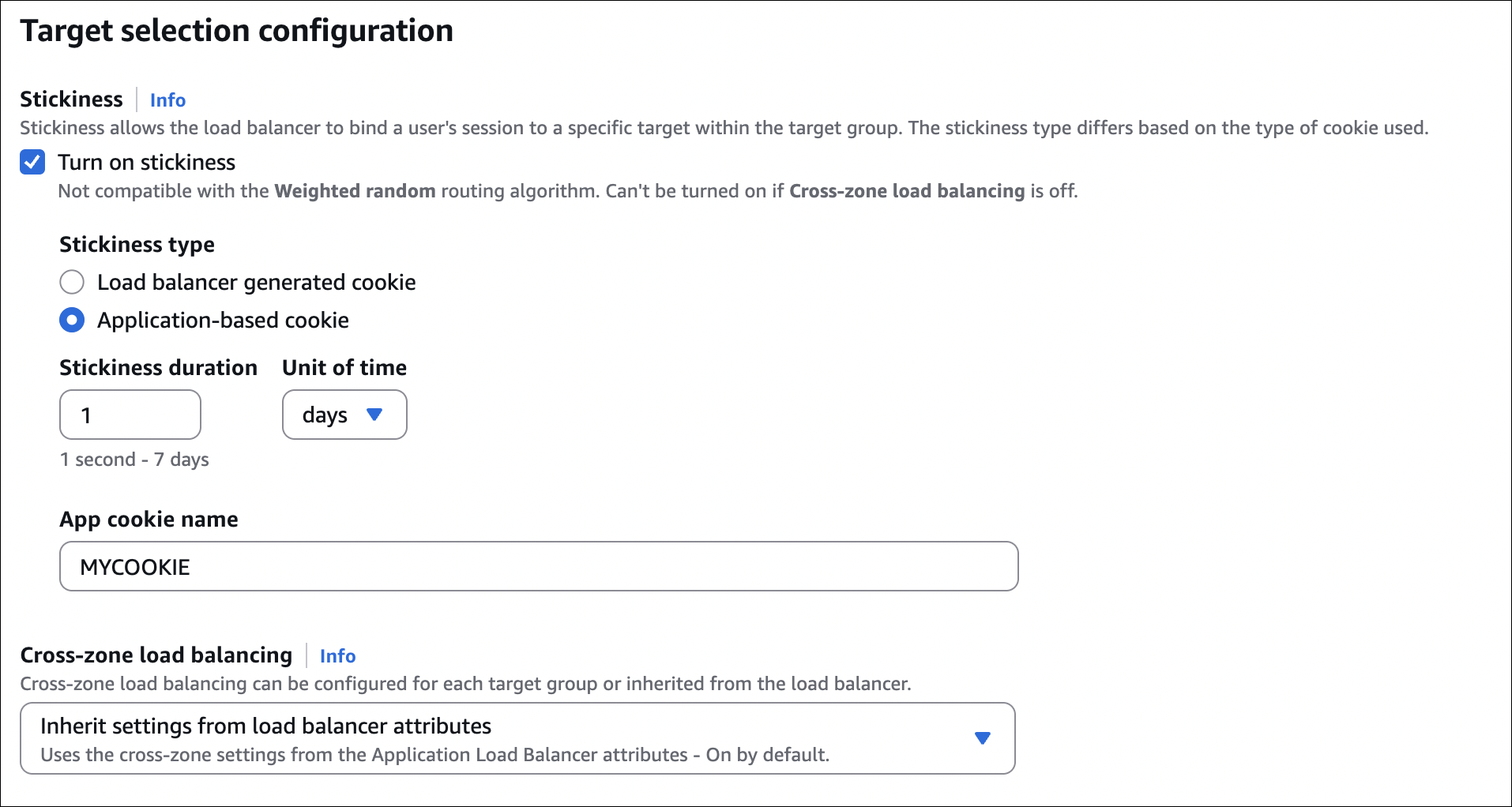

Sticky Sessions (Session Affinity)

By default, ALB distributes requests evenly across targets. But sometimes you need the same user to always go to the same instance (e.g., session stored in memory).

How to enable:

- Enable sticky sessions on target group

- Choose duration (1 second to 7 days)

- ALB sets a cookie (`AWSALB`) to track which instance to use

When to use:

- Session data stored in instance memory

- Applications that don’t share sessions between instances

- Legacy applications that require sticky sessions

When NOT to use:

- If you can, use shared session storage (Redis, DynamoDB, etc.)

- Sticky sessions can cause uneven load distribution

- If instance fails, user loses session anyway

Target Types

ALB can route to different types of targets:

| Target Type | Use Case |
|---|---|
| Instances | EC2 instances (most common) |
| IP addresses | On-premises servers, other VPC resources |
| Lambda functions | Serverless applications |
| ECS tasks | Containerized applications |

Instances:

- Register EC2 instances by instance ID

- Instances can be in different AZs

- Health checks verify instance is responding

IP addresses:

- Use for on-premises servers or resources outside VPC

- Must be in same VPC or connected via VPN/Direct Connect

- Health checks work the same way

Lambda functions:

- Route requests to Lambda functions

- No need for EC2 instances

- Pay per request

ECS tasks:

- Route to containers running in ECS

- ECS registers and deregisters tasks with the target group automatically

- Useful for containerized microservices

Important Notes

- ALB has DNS name, not static IP: Use DNS name, not IP address

- Multi-AZ by default: ALB automatically distributes across multiple AZs

- Idle timeout: Default 60 seconds (can increase up to 4000 seconds)

- Connection draining: ALB stops sending new requests to deregistering targets, waits for existing connections to finish

- Access logs: Can enable to see all requests (stored in S3)

- Cost: Pay per hour + per LCU (Load Balancer Capacity Unit) used

Hands-On: Creating ALB with EC2 Instances

Let’s create a simple setup: 2 EC2 instances behind an ALB. Each instance will show its hostname so we can see load balancing in action.

Step 1: Create EC2 Instances

Create 2 EC2 instances with the same configuration:

- Go to EC2 → Instances → Launch instances

- Name: `web-server-1` (first instance), `web-server-2` (second instance)

- AMI: Amazon Linux 2023

- Instance type: t2.micro (free tier eligible)

- Key pair: Select your key pair (for SSH access)

- Network settings:

- Select your VPC

- Auto-assign public IP: Enable

- Security group: Create new or select existing

- Configure storage: Default (8 GB gp3)

- Advanced details → User data: Paste this script:

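```bash
#!/bin/bash
# Exit on the first error and print each command as it runs
set -ex

# Update packages and install nginx
dnf update -y
dnf install -y nginx

# Serve a page that shows which instance answered the request
echo "<h1>Hostname: $(hostname)</h1>" > /usr/share/nginx/html/index.html

# Start nginx now and on every boot
systemctl enable nginx
systemctl start nginx
```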

- Click Launch instance (repeat for second instance)

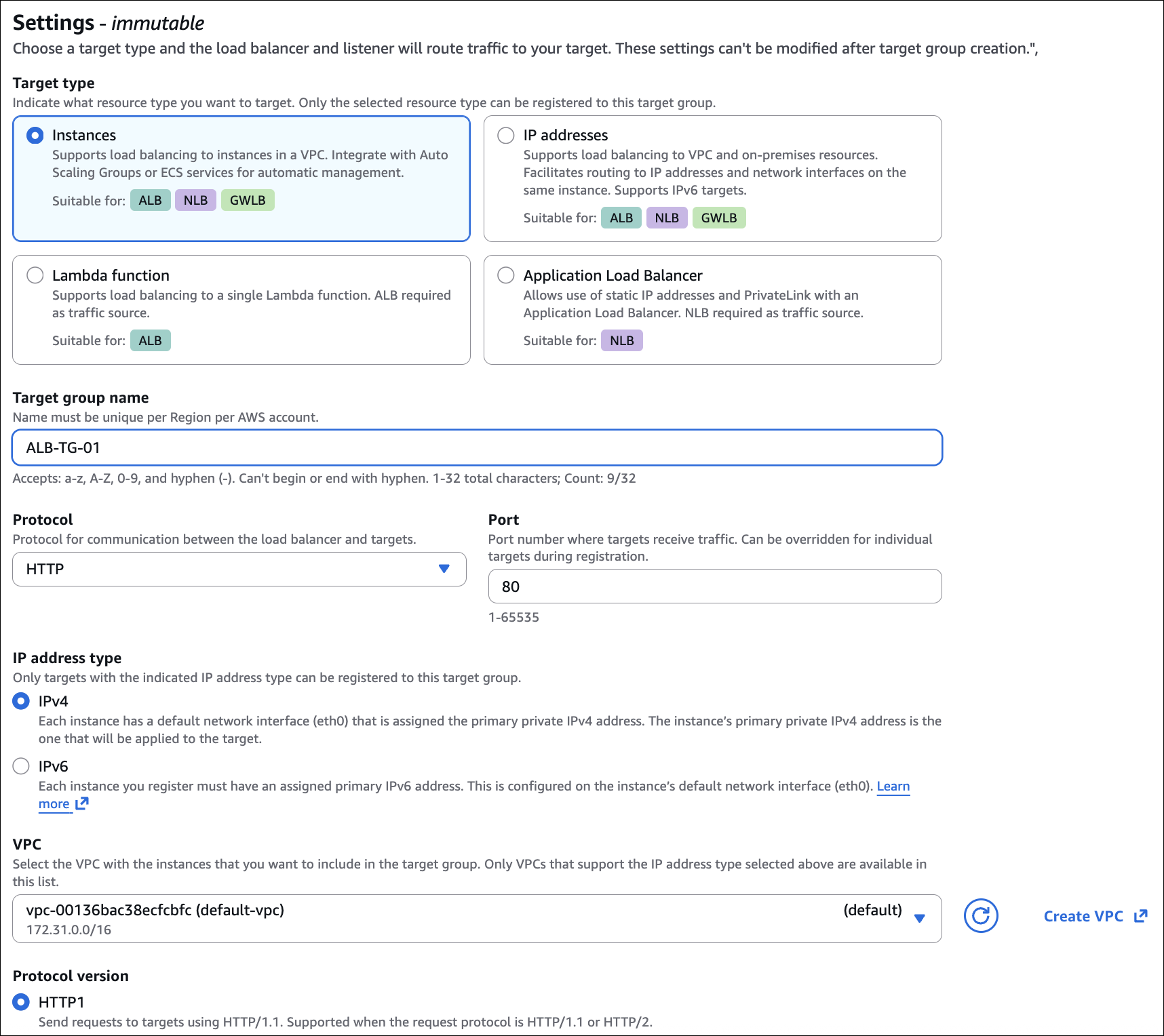

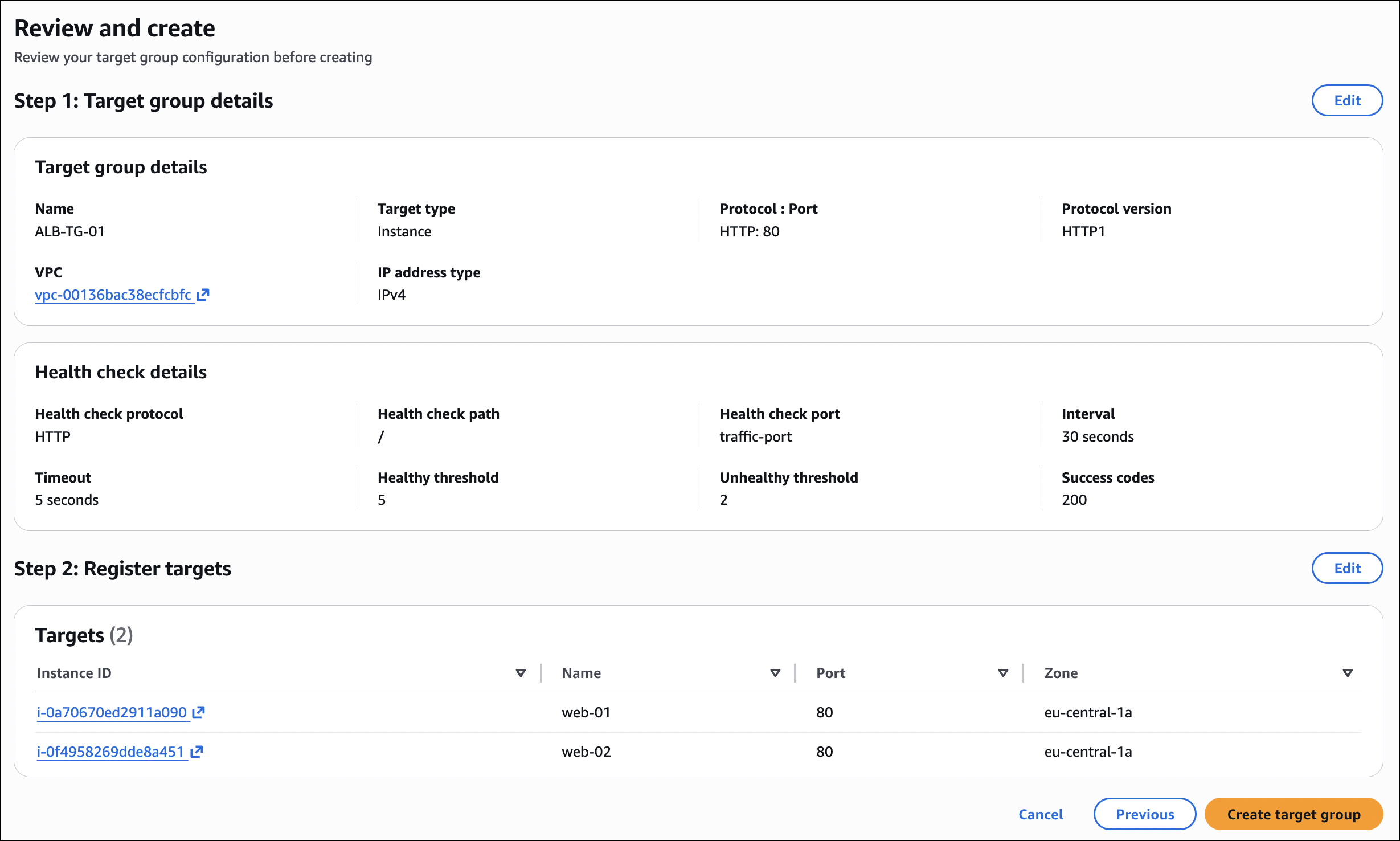

Step 2: Create Target Group

- Go to EC2 → Target Groups → Create target group

- Target type: Instances

- Target group name: `web-servers-tg`

- Protocol: HTTP

- Port: 80

- VPC: Select your VPC

- Health checks:

- Protocol: HTTP

- Path: `/` (or `/index.html`)

- Healthy threshold: 2

- Unhealthy threshold: 2

- Timeout: 5 seconds

- Interval: 30 seconds

- Click Next

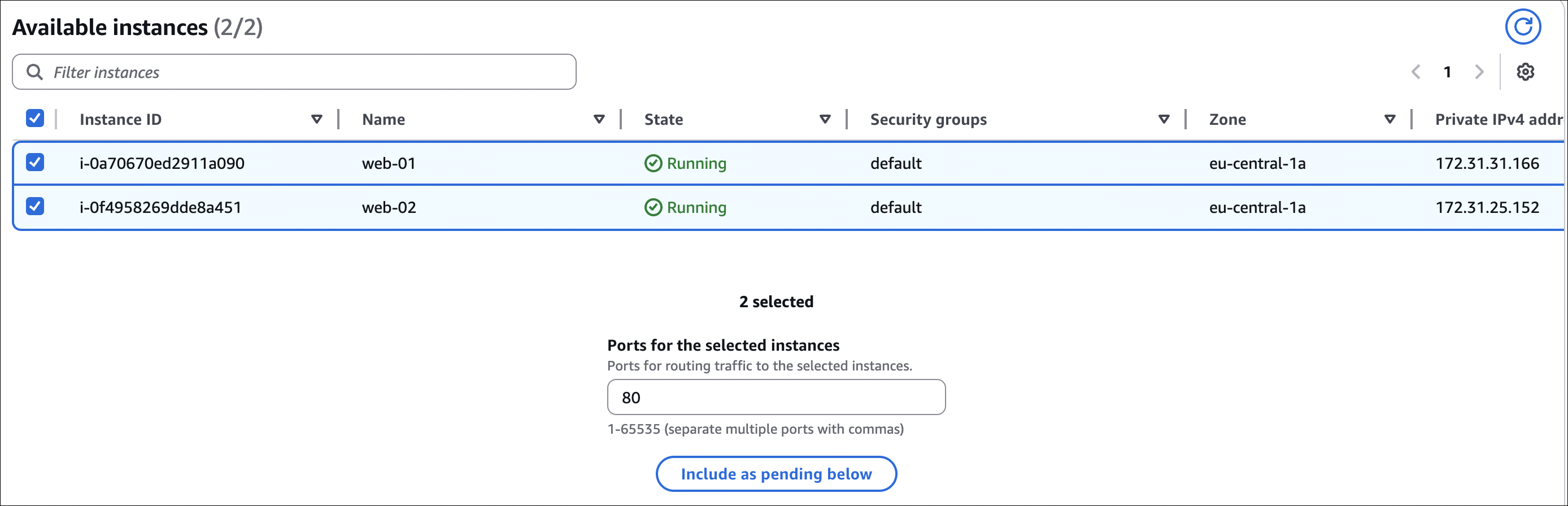

- Register targets:

- Select both EC2 instances (`web-server-1` and `web-server-2`)

- Click Include as pending below

- Click Create target group

Verify targets are healthy:

- Wait a minute or two

- Go to target group → Targets tab

- Both instances should show “healthy” status

- If unhealthy, check security groups and nginx installation

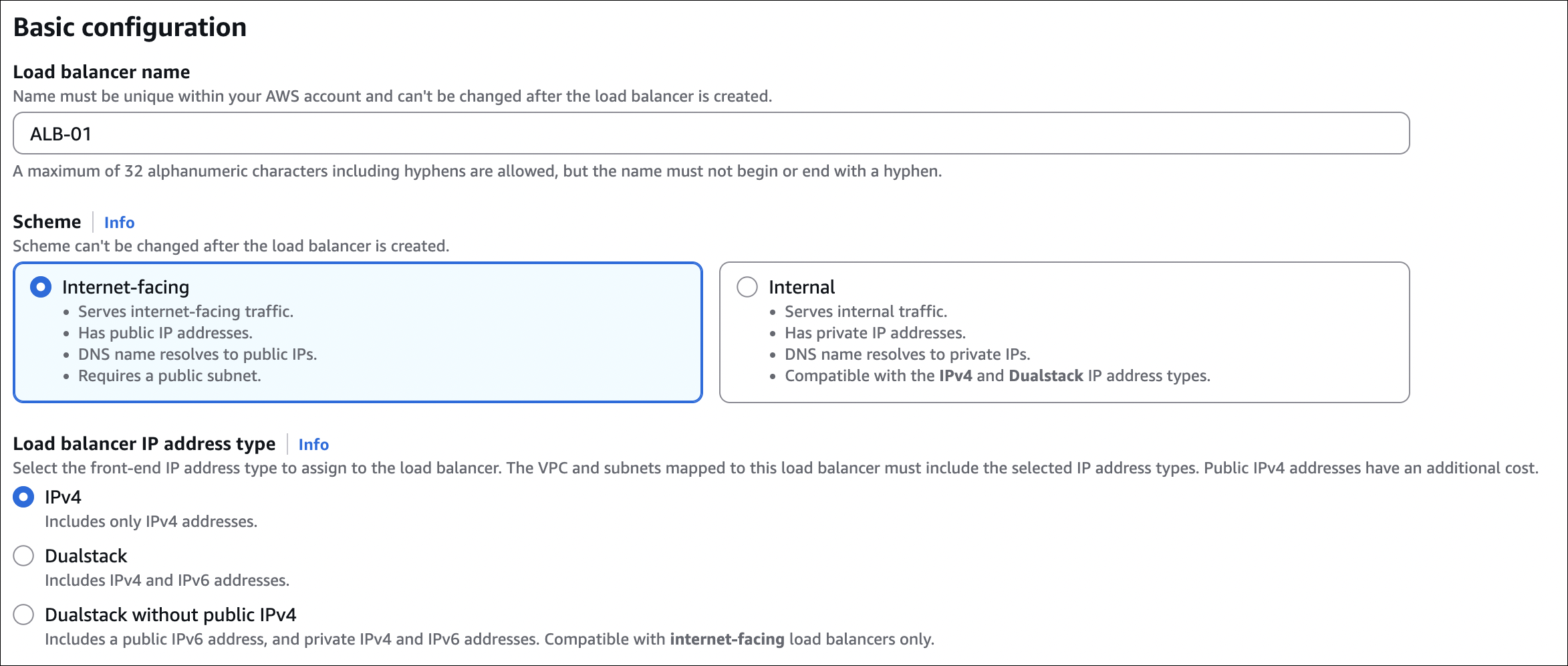

Step 3: Create Application Load Balancer

- Go to EC2 → Load Balancers → Create Load Balancer

- Select Application Load Balancer

- Basic configuration:

- Name: `my-web-alb`

- Scheme: Internet-facing (or Internal if you want)

- IP address type: IPv4

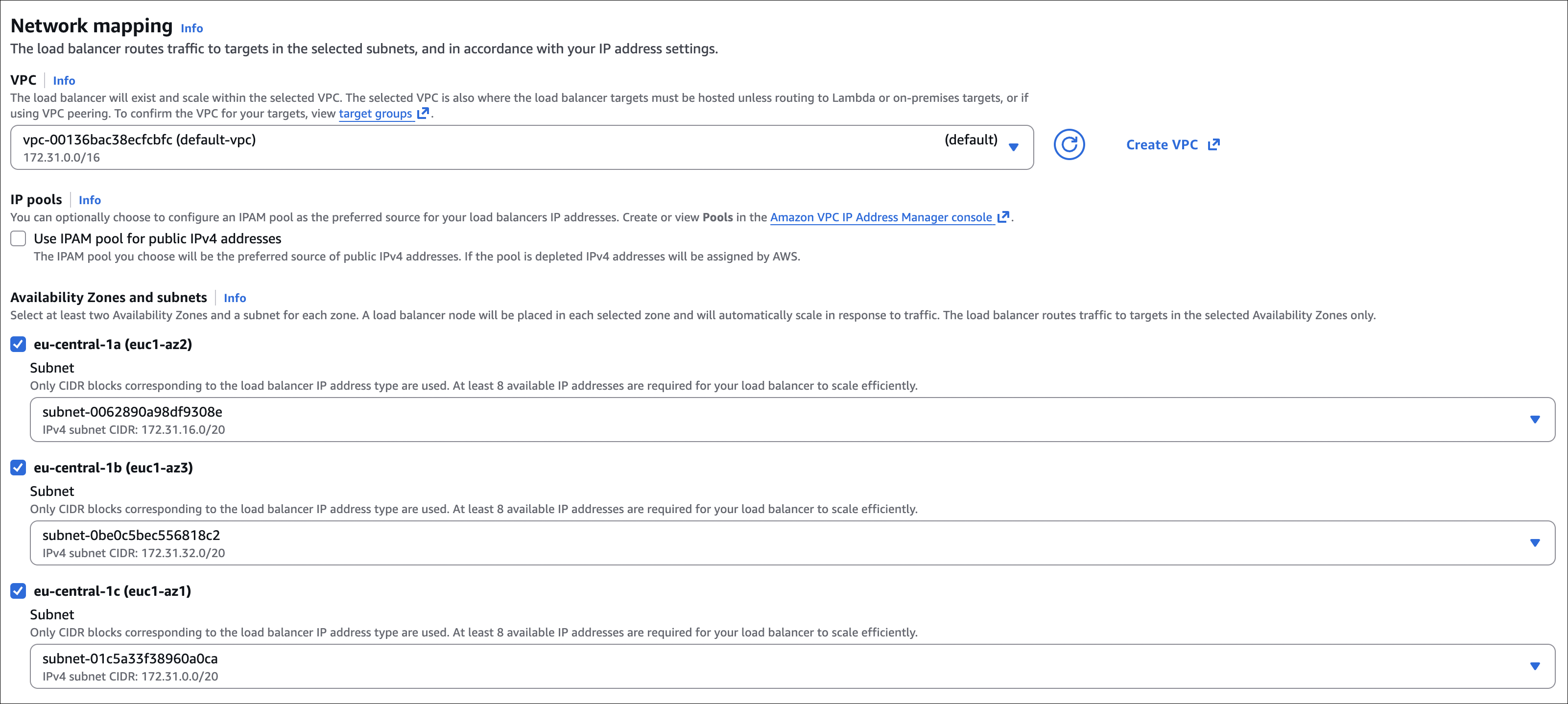

- Network mapping:

- VPC: Select your VPC

- Availability Zones: Select at least 2 AZs (ALB needs multiple AZs)

- Subnets: Select public subnets in each AZ

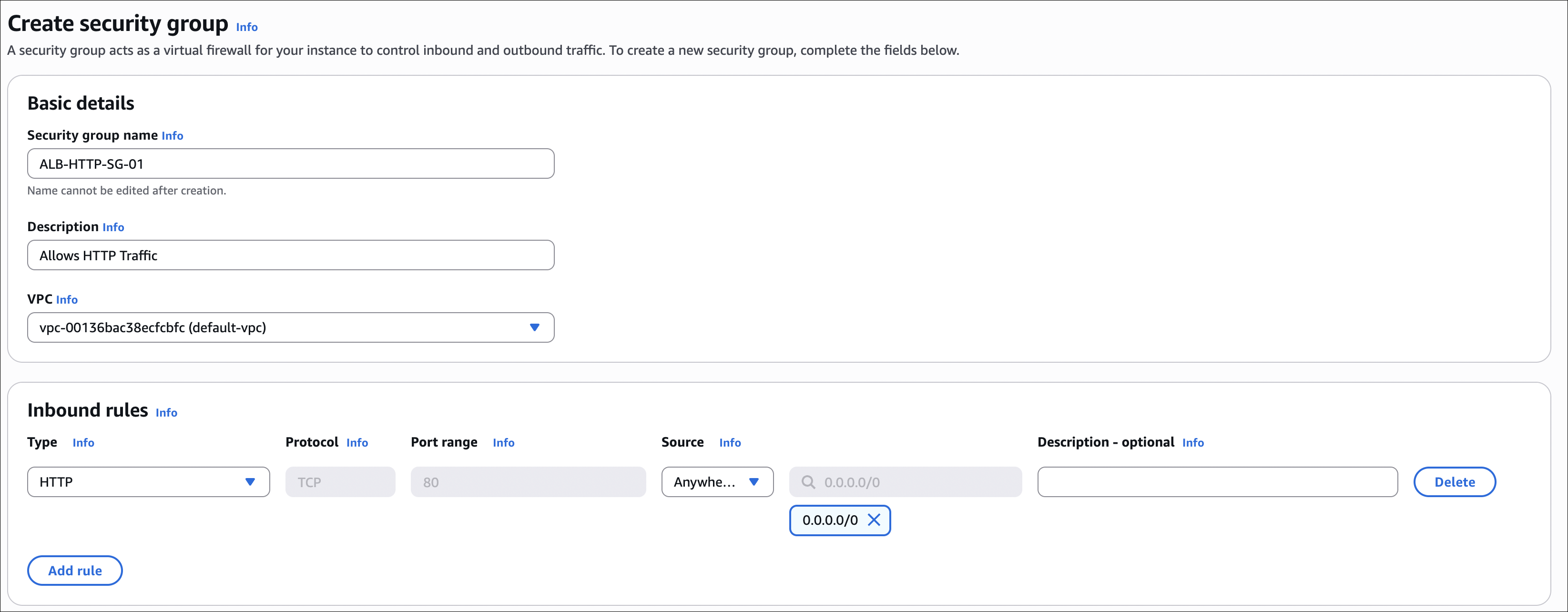

- Security groups:

- Click Create new security group (or select existing)

- Name: `alb-sg`

- Description: Security group for ALB

- Inbound rules: Add rule

- Type: HTTP

- Source: 0.0.0.0/0 (or your IP for testing)

- Click Create security group

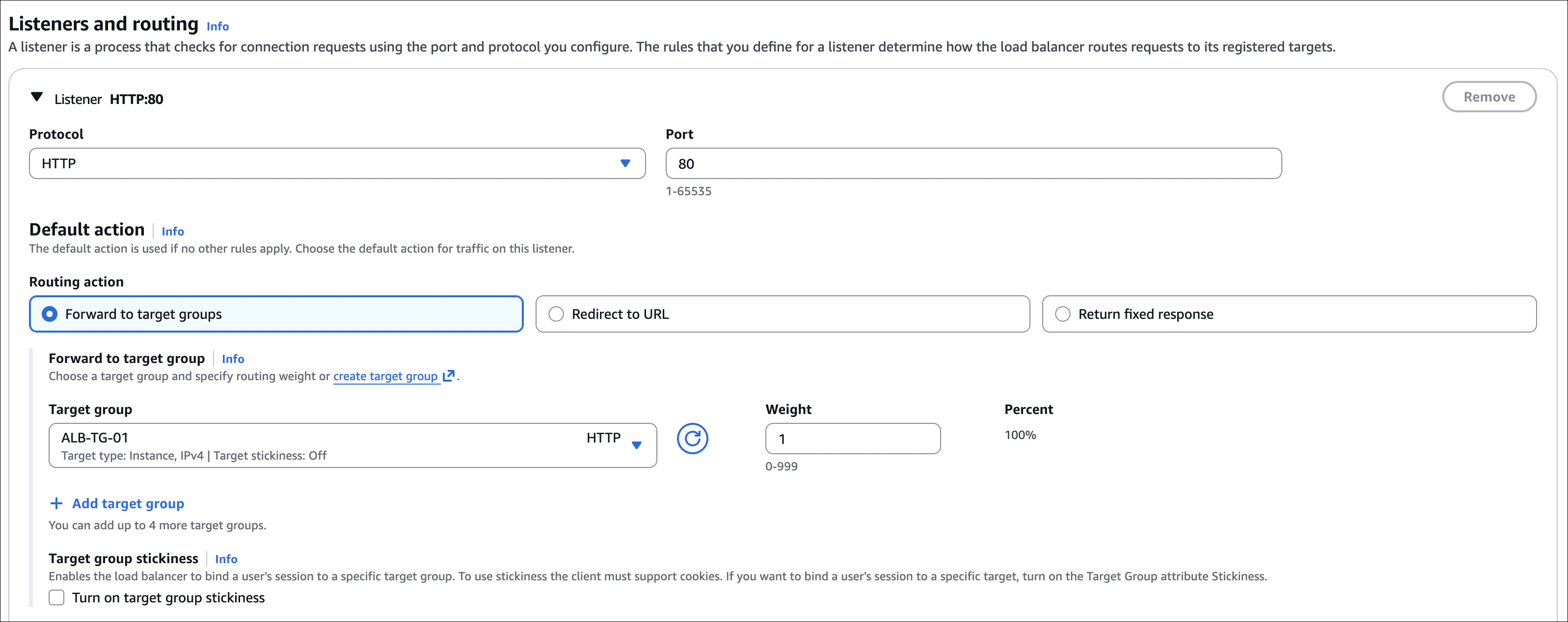

- Listeners and routing:

- Protocol: HTTP

- Port: 80

- Default action: Forward to `web-servers-tg`

- Click Create load balancer

Wait for ALB to be active:

- Takes 1-2 minutes

- Status will change from “provisioning” to “active”

- Note the DNS name (e.g., `my-web-alb-1234567890.us-east-1.elb.amazonaws.com`)

Step 4: Test Load Balancing

- Copy the ALB DNS name (e.g., `alb-01-1635064414.eu-central-1.elb.amazonaws.com`)

- Open it in your browser: `http://alb-01-1635064414.eu-central-1.elb.amazonaws.com/`

- Refresh the page multiple times

- You should see different hostnames from different instances

What you should see:

- First request: `Hostname: ip-172-31-25-152.eu-central-1.compute.internal` (from instance 1)

- Refresh: `Hostname: ip-172-31-31-166.eu-central-1.compute.internal` (from instance 2)

- Refresh again: Back to instance 1, and so on

This shows that ALB is distributing traffic across both instances. Each refresh might hit a different instance.

Using curl:

```bash
curl http://alb-01-1635064414.eu-central-1.elb.amazonaws.com/
```

Run it multiple times to see different hostnames:

```console
$ curl http://alb-01-1635064414.eu-central-1.elb.amazonaws.com/
<h1>Hostname: ip-172-31-25-152.eu-central-1.compute.internal</h1>
$ curl http://alb-01-1635064414.eu-central-1.elb.amazonaws.com/
<h1>Hostname: ip-172-31-31-166.eu-central-1.compute.internal</h1>
```

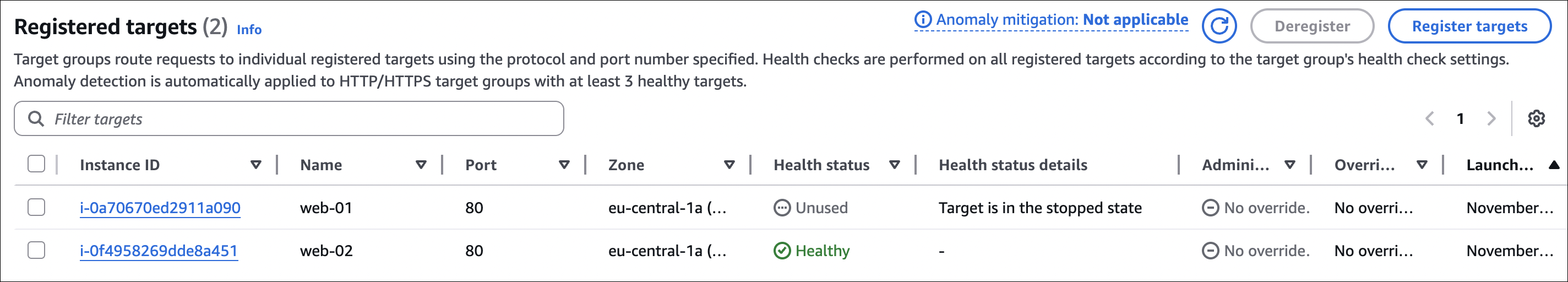
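Instead of eyeballing repeated curl calls, you can tally which instance answered each request. The following is a rough Python sketch using only the standard library; `tally_hostnames` and `fetch_bodies` are hypothetical helper names, and the regular expression assumes the page format written by the user data script earlier in this walkthrough.

```python
import re
from collections import Counter
from urllib.request import urlopen

# Matches the page written by the user data script: <h1>Hostname: ...</h1>
HOSTNAME_RE = re.compile(r"<h1>Hostname: ([^<]+)</h1>")


def tally_hostnames(bodies):
    """Count how many responses came from each backend hostname."""
    counts = Counter()
    for body in bodies:
        match = HOSTNAME_RE.search(body)
        if match:
            counts[match.group(1)] += 1
    return counts


def fetch_bodies(url, attempts=10):
    """Request the URL several times (needs network access to a live ALB)."""
    bodies = []
    for _ in range(attempts):
        with urlopen(url, timeout=5) as response:
            bodies.append(response.read().decode("utf-8", errors="replace"))
    return bodies


# Offline demo using the kind of responses shown in the curl output
sample = [
    "<h1>Hostname: ip-172-31-25-152.eu-central-1.compute.internal</h1>",
    "<h1>Hostname: ip-172-31-31-166.eu-central-1.compute.internal</h1>",
    "<h1>Hostname: ip-172-31-25-152.eu-central-1.compute.internal</h1>",
]
for hostname, hits in sorted(tally_hostnames(sample).items()):
    print(hostname, hits)
```

Against a live load balancer you would call `tally_hostnames(fetch_bodies(url))` with your own ALB URL; with two healthy targets the counts should come out roughly equal.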

Testing failover (automatic removal of unhealthy targets):

- Check which instance has IP `3.72.64.145` (check EC2 console)

- Stop that instance: EC2 → Instances → Select instance → Instance state → Stop instance

- Wait 30-60 seconds (ALB health checks need time to detect)

- Check target group: EC2 → Target Groups → web-servers-tg → Targets tab

- The stopped instance should show as “unhealthy”, “draining”, or “unused” (the state AWS reports for stopped instances)

- Make requests to ALB again:

```console
$ curl http://alb-01-1635064414.eu-central-1.elb.amazonaws.com/
<h1>Hostname: ip-172-31-31-166.eu-central-1.compute.internal</h1>
```

Now all requests go to the remaining healthy instance (IP 18.156.175.118). ALB automatically removed the stopped instance from rotation.

Step 5: Secure EC2 Instances (Remove Direct Public Access)

Right now, you can still access EC2 instances directly using their public IPs. This is a security risk - we want all traffic to go through the load balancer only.

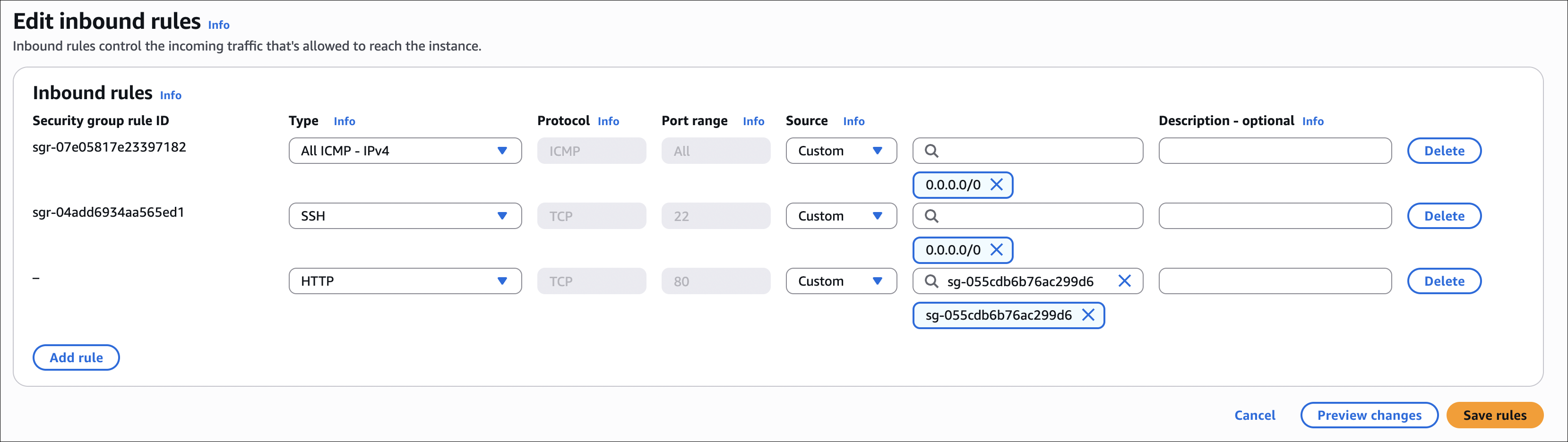

Update EC2 Security Group:

- Go to EC2 → Security Groups

- Find the security group attached to your EC2 instances (check instance details to see which one)

- Select the security group → Inbound rules tab

- Find the HTTP (80) rule that allows traffic from `0.0.0.0/0`

- Click Edit inbound rules

- Remove the HTTP rule that allows `0.0.0.0/0`

- Add new rule:

- Type: HTTP

- Port: 80

- Source: Select Custom → Choose the ALB security group (`alb-sg`)

- Click Save rules

Test it:

- Try accessing instance directly via public IP: `http://3.72.64.145` (should fail or timeout)

- Try accessing via ALB: `http://alb-01-1635064414.eu-central-1.elb.amazonaws.com/` (should work)

Now EC2 instances only accept HTTP traffic from the load balancer. Direct public access is blocked, which is more secure.

What we did:

- Removed public HTTP access (0.0.0.0/0)

- Added rule to allow HTTP only from ALB security group

- Instances are now only accessible through the load balancer

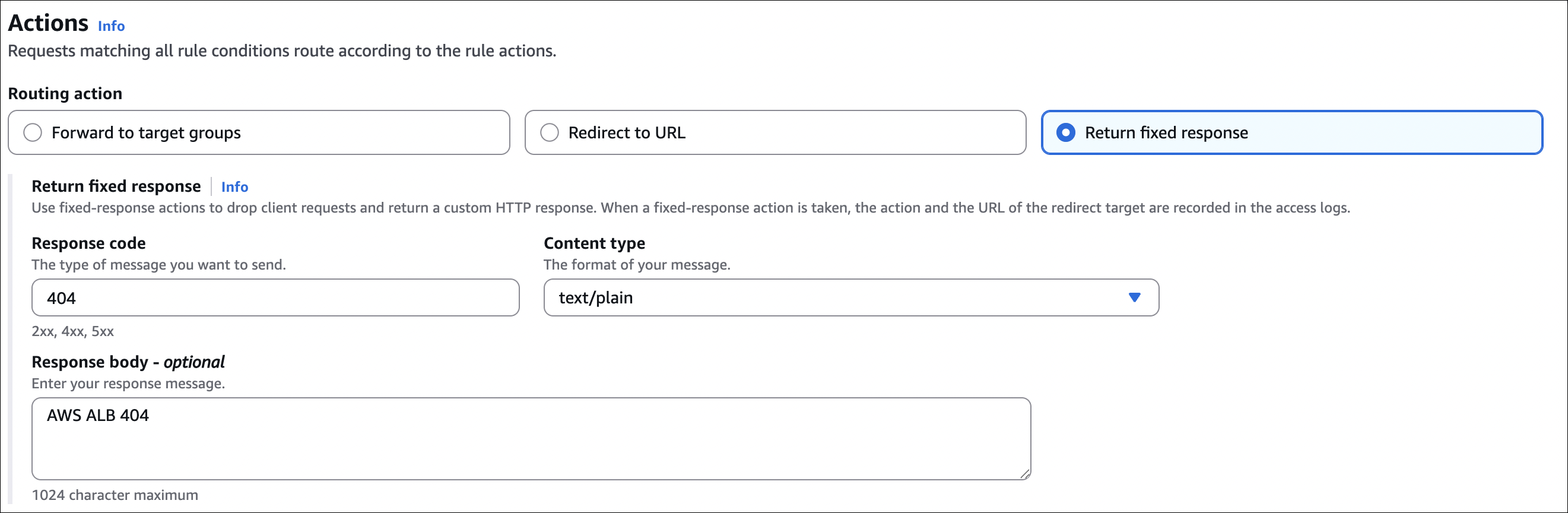

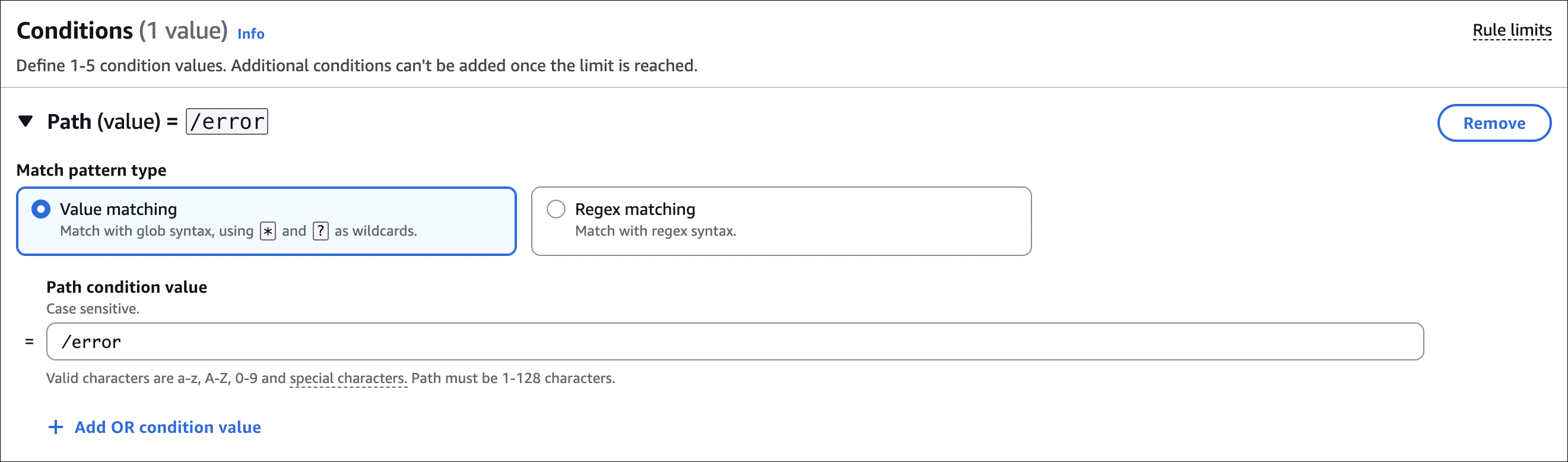

Step 6: Add Listener Rules (Return 404 for Specific Paths)

You can configure ALB to return custom responses (like 404) for specific paths without forwarding to targets. This is useful for blocking certain paths or returning custom error pages.

Example: Return 404 for /error path:

- Go to EC2 → Load Balancers → Select your ALB

- Go to Listeners tab

- Select the listener (HTTP : 80) → Click View/Edit rules

- Click + Add rule (this adds a new rule to the listener)

- Priority: Set a number (e.g., 100) - lower numbers have higher priority

- THEN (actions): First, add the action

- Click Add action

- Select Return fixed response

- Response code: `404`

- Response body: Optional - you can add custom HTML or text

- Content type: `text/html` (or `text/plain`)

- IF (conditions): Then, add the condition

- Click Add condition

- Select Path

- Enter `/error` (or `/error*` to match `/error` and `/error/anything`)

- Click Save

Test it:

```console
$ curl http://alb-01-1635064414.eu-central-1.elb.amazonaws.com/error
<html>
<head><title>404 Not Found</title></head>
<body>
<h1>Not Found</h1>
</body>
</html>
```

The ALB returns 404 directly without forwarding to EC2 instances.

Network Load Balancer (NLB)

So we talked about ALB, which is great for HTTP/HTTPS traffic. But what if you need something faster? Or what if you’re not using HTTP at all? That’s where NLB comes in.

NLB works at Layer 4 (TCP/UDP level). It doesn’t care about HTTP content - it just looks at IP addresses and ports and forwards packets. Think of it like a postal worker who only looks at the address, not the letter inside.

The big difference from ALB:

ALB is smart - it reads your HTTP requests, understands paths and headers, and can route based on content. NLB is fast - it doesn’t inspect the payload, it just forwards connections. That’s why AWS positions NLB for millions of requests per second at very low latency, while ALB trades some of that raw throughput for Layer 7 features.

When would you use NLB?

Let me give you some scenarios. Say you’re running a gaming server. You need super low latency - every millisecond counts. NLB is perfect for this because it’s much faster than ALB.

Or maybe you need static IP addresses. ALB gives you a DNS name whose underlying IP addresses can change, but NLB gives you fixed IP addresses - one per Availability Zone. These IPs stay the same for the life of the load balancer. This is useful when you need to whitelist IPs in firewalls or third-party systems.

Another cool thing: NLB can preserve the client’s real IP address. With ALB, you get the client IP in the X-Forwarded-For header, but with NLB, the actual source IP is preserved. Your application sees the real client IP, not the load balancer’s IP.

NLB vs ALB - Quick comparison:

| Feature | NLB | ALB |
|---|---|---|
| Layer | Layer 4 (TCP/UDP) | Layer 7 (HTTP/HTTPS) |
| Performance | Millions of requests/sec | Thousands of requests/sec |
| Latency | Ultra-low | Low |
| IP Address | Static IP (one per AZ) | DNS name (underlying IPs can change) |
| Source IP | Can preserve client IP | X-Forwarded-For header |
| Content-based routing | No | Yes (path, host, headers) |
| Protocols | TCP, UDP, TLS | HTTP, HTTPS |

Static IP addresses - why they matter:

When you create an NLB, AWS gives you one static IP per Availability Zone. For example, if you have 2 AZs, you get 2 IPs. These never change. Unlike ALB where you get a DNS name that points to different IPs, NLB gives you actual IPs you can use.

This is super useful when:

- You need to whitelist IPs in firewalls

- Third-party systems require static IPs

- You have compliance requirements

- You want predictable IPs for your applications

You can even attach Elastic IPs to NLB for even more control.

Preserving source IP:

Here’s something I find useful. With ALB, when a request comes in, your EC2 instance sees the ALB’s IP as the source. The real client IP is in the X-Forwarded-For header, so you need to read that header in your application.

With NLB, you can enable “Preserve client IP addresses” in the target group. When you do this, your EC2 instance sees the actual client IP as the source. No need to read headers - it’s just there. This is great for applications that need the real client IP for logging, security, or geolocation.
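Here is a minimal Python sketch of the difference from the application's point of view. It assumes a single ALB in front of the instance that appends the connecting client's IP to `X-Forwarded-For` (so the right-most entry is the one the ALB added), and that header names arrive exactly as written; `client_ip` is a hypothetical helper, not part of any framework.

```python
def client_ip(headers, peer_ip):
    """Best-effort client IP: last X-Forwarded-For hop if present, else the TCP peer."""
    forwarded = headers.get("X-Forwarded-For", "")
    hops = [hop.strip() for hop in forwarded.split(",") if hop.strip()]
    # Entries to the left of the last hop are client-supplied and can be spoofed
    return hops[-1] if hops else peer_ip


# Behind an ALB: the TCP peer is the load balancer, the client is in the header
print(client_ip({"X-Forwarded-For": "198.51.100.4, 203.0.113.9"}, "172.31.0.10"))

# Behind an NLB with client IP preservation: no header, the peer is the client
print(client_ip({}, "203.0.113.9"))
```

Both calls print `203.0.113.9`; only the place where the address comes from differs.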

What can NLB route to?

Just like ALB, NLB uses target groups. You can register:

- EC2 instances (most common)

- IP addresses (for on-premises servers or other VPC resources)

- Even other ALBs (advanced - you can chain NLB → ALB)

The setup is similar to ALB - create target group, register targets, create listener, point to target group. But remember, NLB doesn’t understand HTTP, so you can’t do path-based routing or host-based routing. It’s just IP and port.

When to use which:

Use NLB when you need extreme performance, static IPs, or you’re using TCP/UDP protocols (not HTTP). Gaming servers, IoT applications, real-time streaming - these are perfect for NLB.

Use ALB when you need content-based routing, working with HTTP/HTTPS, or need features like SSL termination, redirects, or fixed responses. Web applications, microservices, container apps - these work great with ALB.

You can even use both together. Put NLB in front of ALB - NLB handles the TCP layer and forwards to ALB, which handles HTTP routing. This gives you the best of both worlds.

Hands-On: Creating NLB with Existing EC2 Instances

Let’s create an NLB using the same EC2 instances we used for ALB. This will show you how NLB works and how it’s different from ALB.

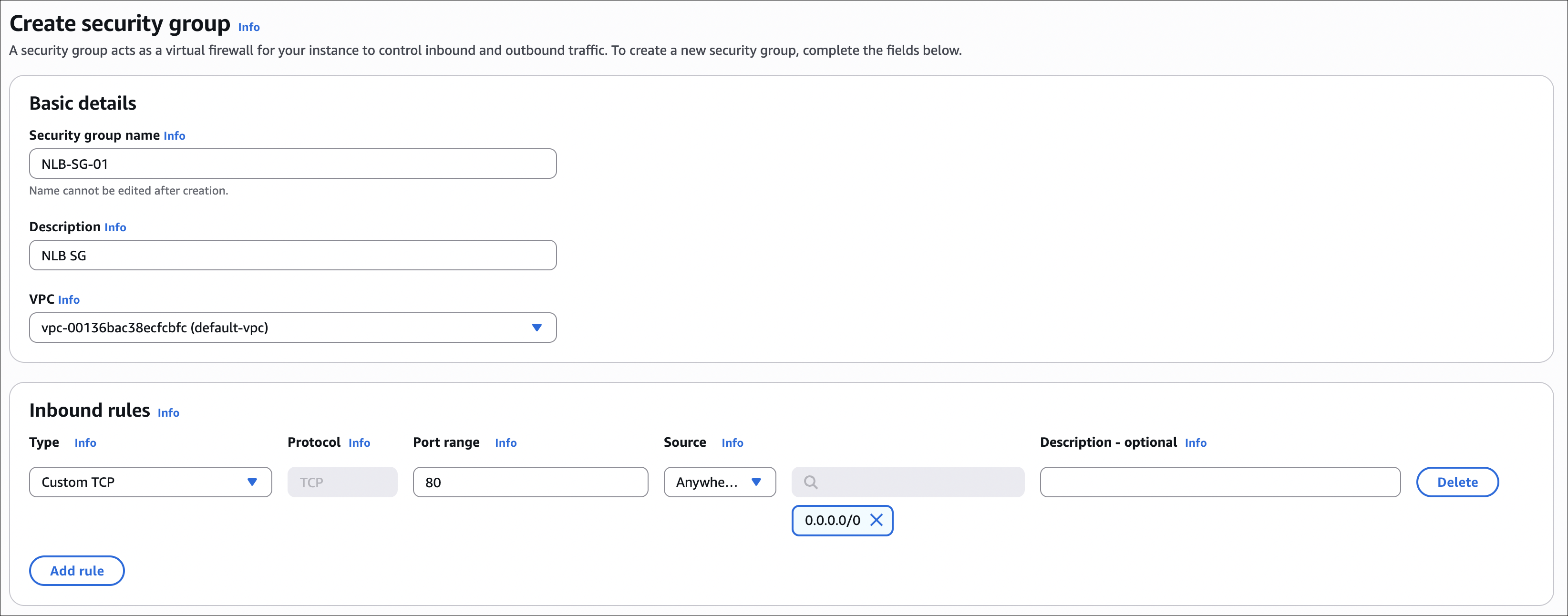

Step 1: Create NLB Security Group

First, we need a security group for the NLB that allows TCP traffic on port 80.

- Go to EC2 → Security Groups → Create security group

- Name: `nlb-sg`

- Description: Security group for NLB

- Inbound rules: Add rule

- Type: Custom TCP

- Port: 80

- Source: 0.0.0.0/0 (or your IP for testing)

- Click Create security group

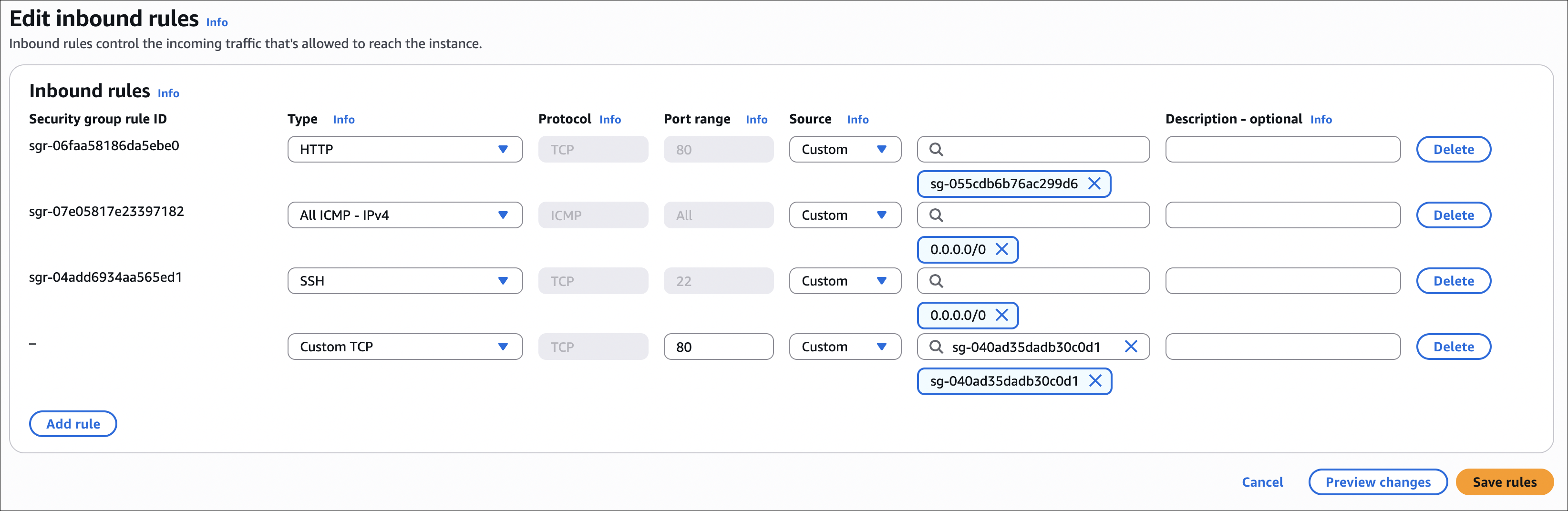

Step 2: Update EC2 Security Groups

Now we need to update the EC2 instances’ security groups to allow TCP traffic from the NLB security group.

- Go to EC2 → Security Groups

- Find the security group attached to your EC2 instances

- Select it → Inbound rules tab

- Click Edit inbound rules

- Add new rule:

- Type: Custom TCP

- Port: 80

- Source: Select Custom → Choose the NLB security group (`nlb-sg`)

- Click Save rules

Make sure the rule allows TCP port 80 from the NLB security group. In a security group, the “HTTP” rule type is just a preset for TCP port 80, so either type works; what matters is the port and the source.

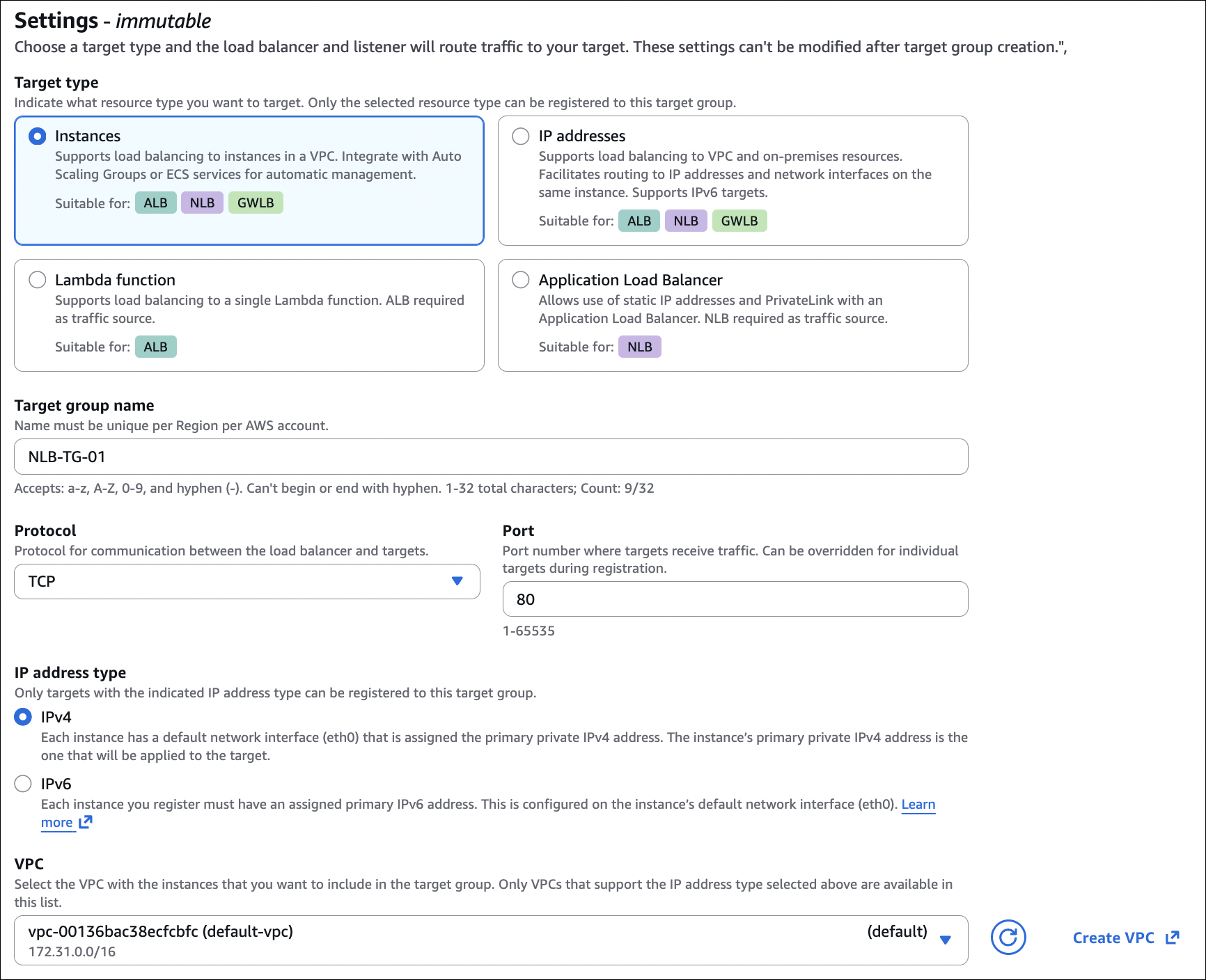

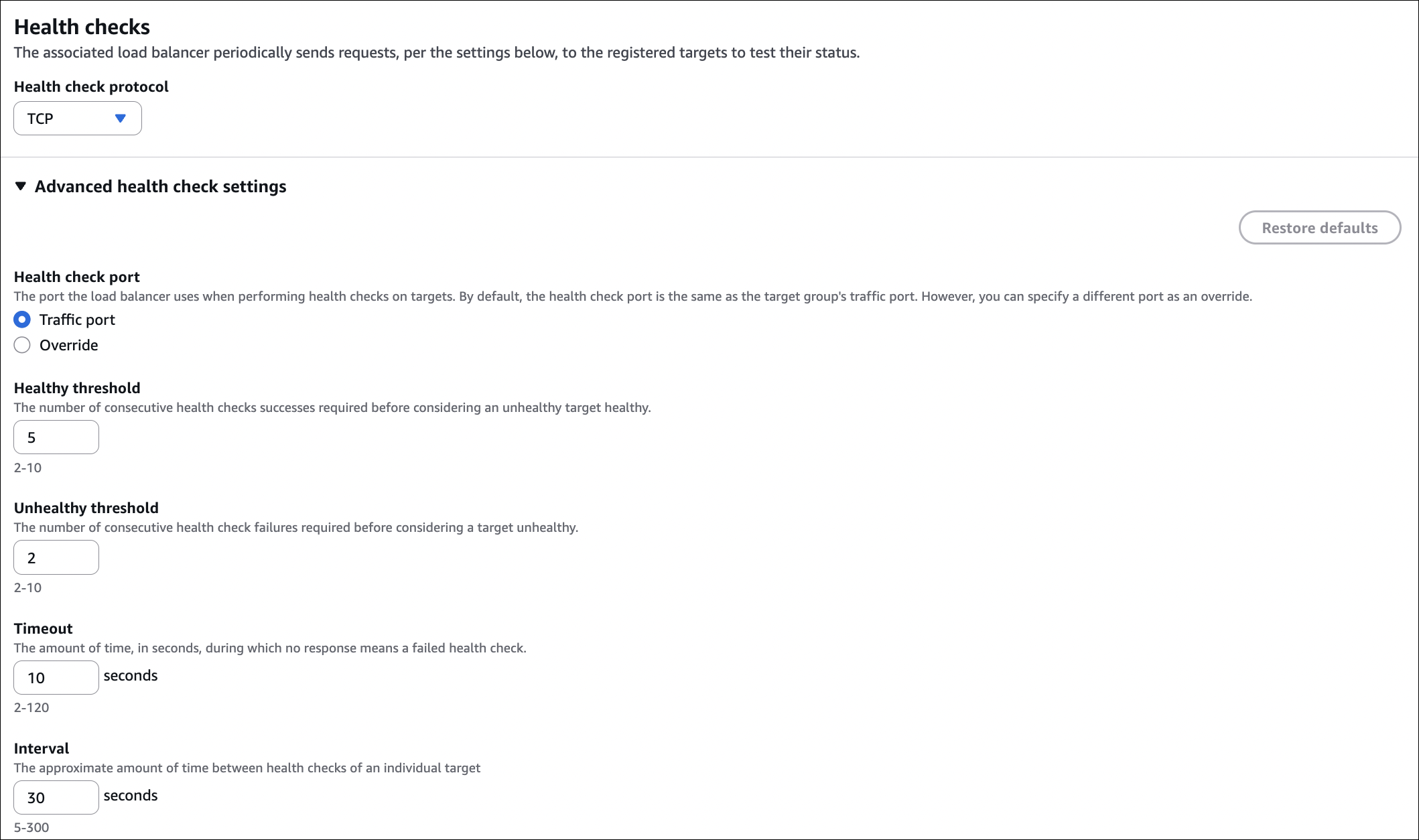

Step 3: Create Target Group

- Go to EC2 → Target Groups → Create target group

- Target type: Instances

- Target group name: `web-servers-nlb-tg`

- Protocol: TCP

- Port: 80

- VPC: Select your VPC

- Health checks:

- Protocol: TCP (or HTTP if you want to check HTTP response)

- Port: 80

- Healthy threshold: 2

- Unhealthy threshold: 2

- Timeout: 10 seconds

- Interval: 30 seconds

- Click Next

- Register targets:

- Select both EC2 instances (`web-server-1` and `web-server-2`)

- Click Include as pending below

- Click Create target group

Step 4: Create Network Load Balancer

- Go to EC2 → Load Balancers → Create Load Balancer

- Select Network Load Balancer

- Basic configuration:

- Name: `my-web-nlb`

- Scheme: Internet-facing (or Internal if you want)

- IP address type: IPv4

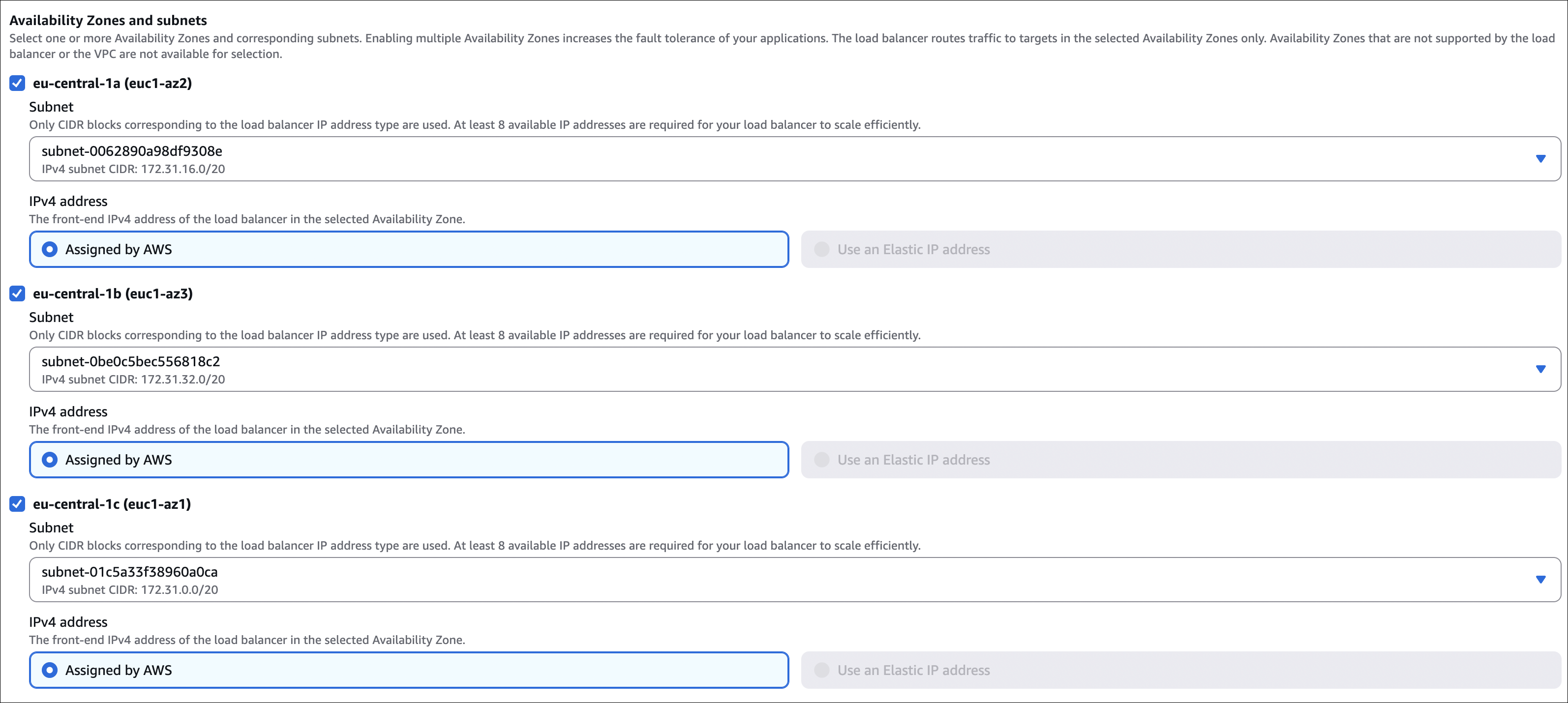

- Network mapping:

- VPC: Select your VPC

- Availability Zones: Select at least 2 AZs (an NLB can run in a single AZ, but two or more gives you redundancy)

- Subnets: Select public subnets in each AZ

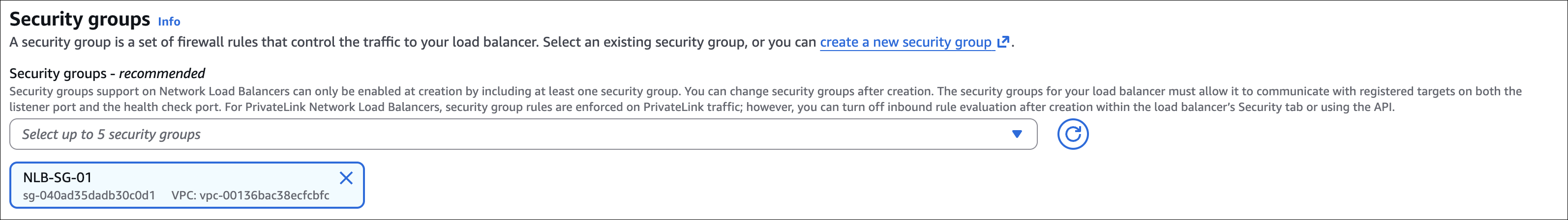

- Security groups:

- Select `nlb-sg` (the one we created)

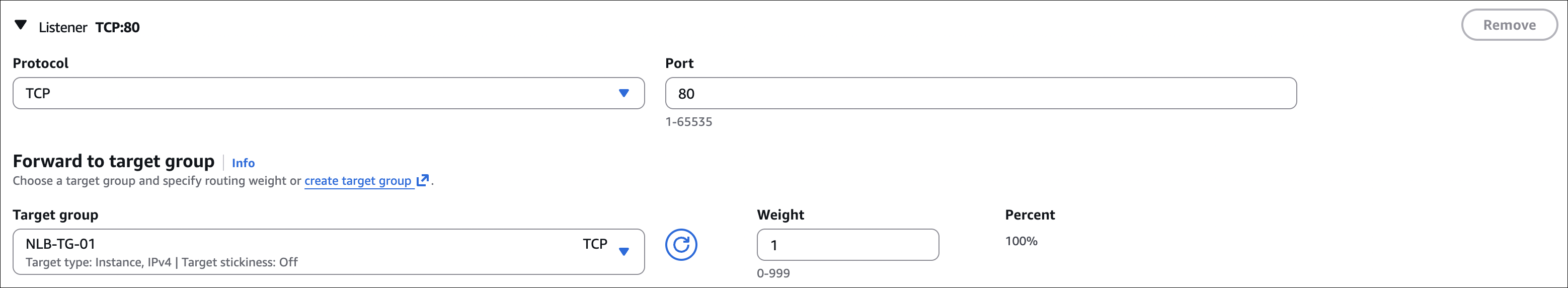

- Listeners and routing:

- Protocol: TCP

- Port: 80

- Default action: Forward to `web-servers-nlb-tg`

- Click Create load balancer

Wait for NLB to be active:

- Takes 1-2 minutes

- Status will change from “provisioning” to “active”

- Note the DNS name and static IP addresses (one per AZ)

Step 5: Test NLB

- Copy the NLB DNS name (e.g., `my-web-nlb-1234567890.elb.eu-central-1.amazonaws.com`)

- Make requests using the DNS name or static IP addresses:

```console
$ curl http://my-web-nlb-1234567890.elb.eu-central-1.amazonaws.com/
<h1>Hostname: ip-172-31-25-152.eu-central-1.compute.internal</h1>
$ curl http://my-web-nlb-1234567890.elb.eu-central-1.amazonaws.com/
<h1>Hostname: ip-172-31-31-166.eu-central-1.compute.internal</h1>
```

You should see different hostnames, just like with ALB. But remember, NLB is working at TCP level - it doesn’t understand HTTP, it just forwards TCP packets.

Using static IP addresses:

You can also use the static IP addresses directly:

```console
$ curl http://203.0.113.10/
<h1>Hostname: ip-172-31-25-152.eu-central-1.compute.internal</h1>
```

These IPs stay fixed for the life of the NLB, unlike the IP addresses behind an ALB’s DNS name.

What’s different from ALB:

- NLB uses TCP protocol (not HTTP/HTTPS in listener)

- Security group rules are written as TCP on port 80 (the “HTTP” rule type is the same thing)

- You get static IP addresses (one per AZ)

- No content-based routing (can’t route by path or host)

- Much faster performance

Testing failover:

Just like with ALB, if you stop one instance, NLB will automatically route traffic to the healthy instance. Health checks work the same way.

Gateway Load Balancer (GWLB)

Now here’s something different. GWLB is not for your regular web applications. It’s specifically designed for network security appliances - things like firewalls, intrusion detection systems, or deep packet inspection tools.

Think of it this way: ALB and NLB route traffic to your applications. GWLB routes traffic through security appliances first, then to your applications. It’s like a security checkpoint that all your traffic must pass through.

The problem it solves:

Imagine you have a firewall or security appliance that needs to inspect all your traffic. Traditionally, you’d put it in the network path - but what if you need to scale it? What if traffic increases and your single firewall can’t handle it? You’d need to manually add more firewalls and configure routing. That’s a pain.

GWLB solves this by automatically distributing traffic across multiple security appliances. You deploy your firewall (or whatever security tool) as a target, and GWLB sends traffic to it. If you need more capacity, just add more firewall instances - GWLB handles the load balancing automatically.

How it works:

GWLB works at Layer 3 (IP level). It uses something called GENEVE protocol to encapsulate traffic and send it to your security appliances. The security appliance inspects the traffic, then sends it back to GWLB, which forwards it to the final destination.

Here’s the flow:

- Traffic comes in to GWLB

- GWLB encapsulates it and sends to security appliance (firewall, IDS, etc.)

- Security appliance inspects/processes the traffic

- Security appliance sends it back to GWLB

- GWLB forwards to final destination (your application)

It’s transparent to your applications - they don’t know traffic went through a security appliance.

What makes it special:

Unlike ALB and NLB, GWLB is designed to work with third-party security appliances. You can use appliances from vendors like Palo Alto, Check Point, Fortinet, or even build your own using EC2 instances.

The key feature is that it preserves the original source and destination IPs. Your security appliance sees the real traffic, not some modified version. This is important because security tools often need to see the actual source IP for threat detection.

GWLB vs other load balancers:

| Feature | GWLB | ALB | NLB |
|---|---|---|---|
| Layer | Layer 3 (IP) | Layer 7 (HTTP) | Layer 4 (TCP/UDP) |
| Purpose | Security appliances | Web applications | High performance |
| Protocol | GENEVE | HTTP/HTTPS | TCP/UDP |
| Targets | Security appliances | EC2, Lambda, etc. | EC2, IPs |
| Use case | Firewall, IDS, DPI | Web apps, APIs | Gaming, IoT |

Real-world example:

Let’s say you’re running a web application and you want all traffic to go through a firewall first. You’d:

- Deploy firewall appliances (maybe 2-3 instances for redundancy)

- Create GWLB target group and register firewall instances

- Create GWLB

- Configure route tables so traffic to your web servers goes through GWLB

- GWLB sends traffic to firewalls, firewalls inspect it, send it back, GWLB forwards to web servers

Now all your traffic is inspected by firewalls, and if you need more capacity, just add more firewall instances - GWLB handles the rest.

Sticky Sessions (Session Affinity)

This is a feature available in ALB (and some other load balancers). Let me explain what it is and when you might need it.

What are sticky sessions?

By default, load balancers distribute requests evenly across all healthy instances. User A’s first request goes to instance 1, second request goes to instance 2, third request goes to instance 1 again, and so on. This is great for load distribution, but sometimes it causes problems.

Imagine you have a shopping cart application. User adds items to cart, and the cart data is stored in the instance’s memory (not in a database or shared storage). If the first request goes to instance 1 and stores cart data there, but the second request goes to instance 2, the user won’t see their cart items. That’s a problem.

Sticky sessions solve this by ensuring the same user always goes to the same instance. Once a user hits instance 1, all their subsequent requests go to instance 1. The load balancer “sticks” the user to that instance.

How it works:

When you enable sticky sessions, the load balancer sets a cookie in the user’s browser. This cookie contains information about which instance to route to. When the user makes another request, the load balancer reads the cookie and sends them to the same instance.

In ALB:

ALB calls this “stickiness” (sticky sessions), and it’s configured at the target group level. You enable it, set a duration (1 second to 7 days), and ALB automatically sets a cookie called AWSALB. The cookie expires after the duration you set.
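The cookie mechanism can be illustrated with a toy Python model. This is a sketch under loud assumptions, not how ALB is implemented: `StickyBalancer` is a hypothetical class, the cookie here stores the target ID in plain text (the real AWSALB cookie is opaque and generated by the load balancer), and the expiry duration is not modeled.

```python
from itertools import cycle


class StickyBalancer:
    COOKIE = "AWSALB"  # cookie name used by ALB duration-based stickiness

    def __init__(self, targets):
        self._targets = set(targets)
        self._round_robin = cycle(targets)

    def route(self, cookies):
        """Return (target, cookies_to_set) for one request."""
        target = cookies.get(self.COOKIE)
        if target not in self._targets:
            # No cookie yet (or it names an unknown target): pick the next target
            target = next(self._round_robin)
        return target, {self.COOKIE: target}


lb = StickyBalancer(["instance-1", "instance-2"])

first_target, user_cookies = lb.route({})      # new user, no cookie yet
second_target, _ = lb.route(user_cookies)      # same user comes back with the cookie
other_target, _ = lb.route({})                 # a different user without a cookie

print(first_target, second_target, other_target)
```

The returning user lands on the same instance both times, while the new user is handed to the next instance in rotation.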

The problem with sticky sessions:

While sticky sessions solve the immediate problem, they create other issues:

- Uneven load distribution: If one user makes 1000 requests and another makes 10, the first user’s instance gets all the load

- Instance failure: If the instance a user is stuck to fails, they lose their session anyway

- Scaling issues: When you add or remove instances, some users need to be redistributed

How to enable in ALB:

- Go to your target group

- Go to Attributes tab

- Click Edit

- Enable Stickiness

- Set duration (1 second to 7 days)

- Save

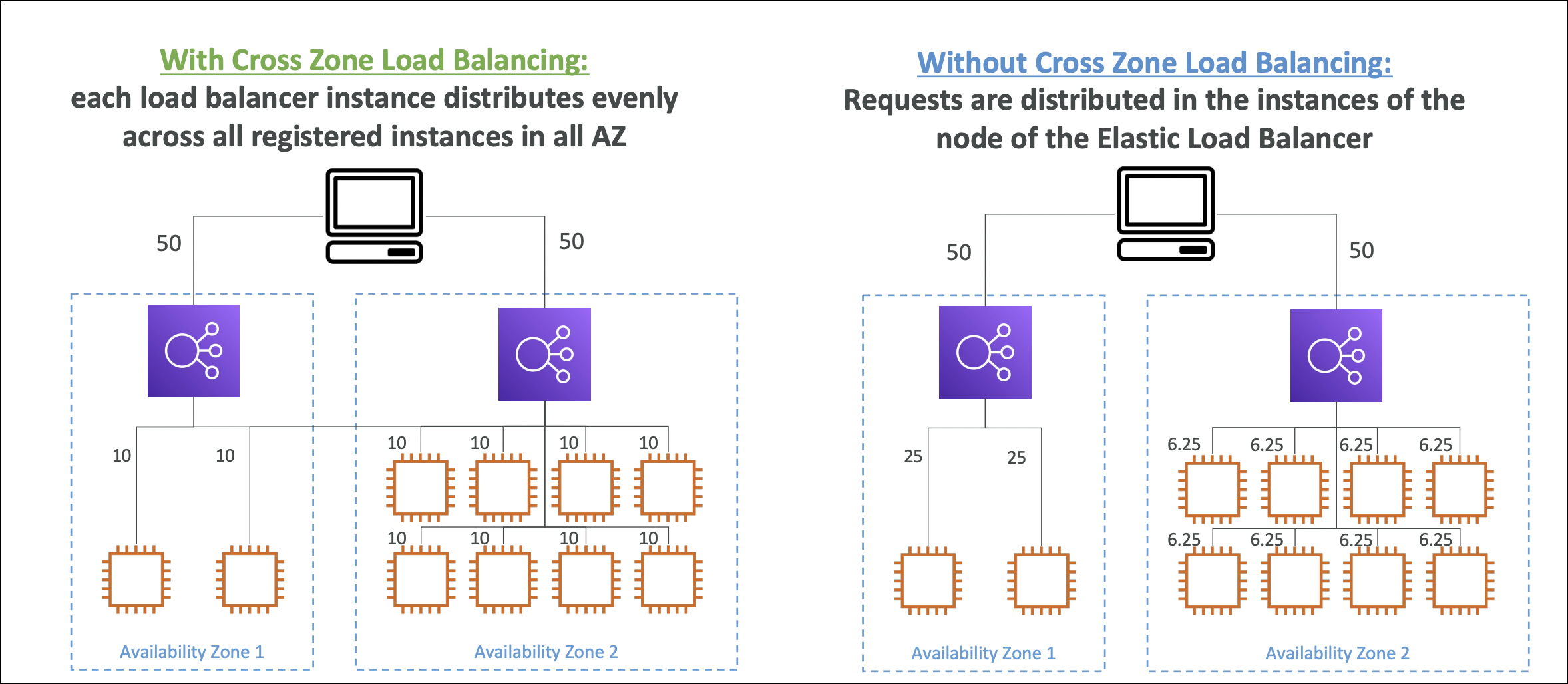

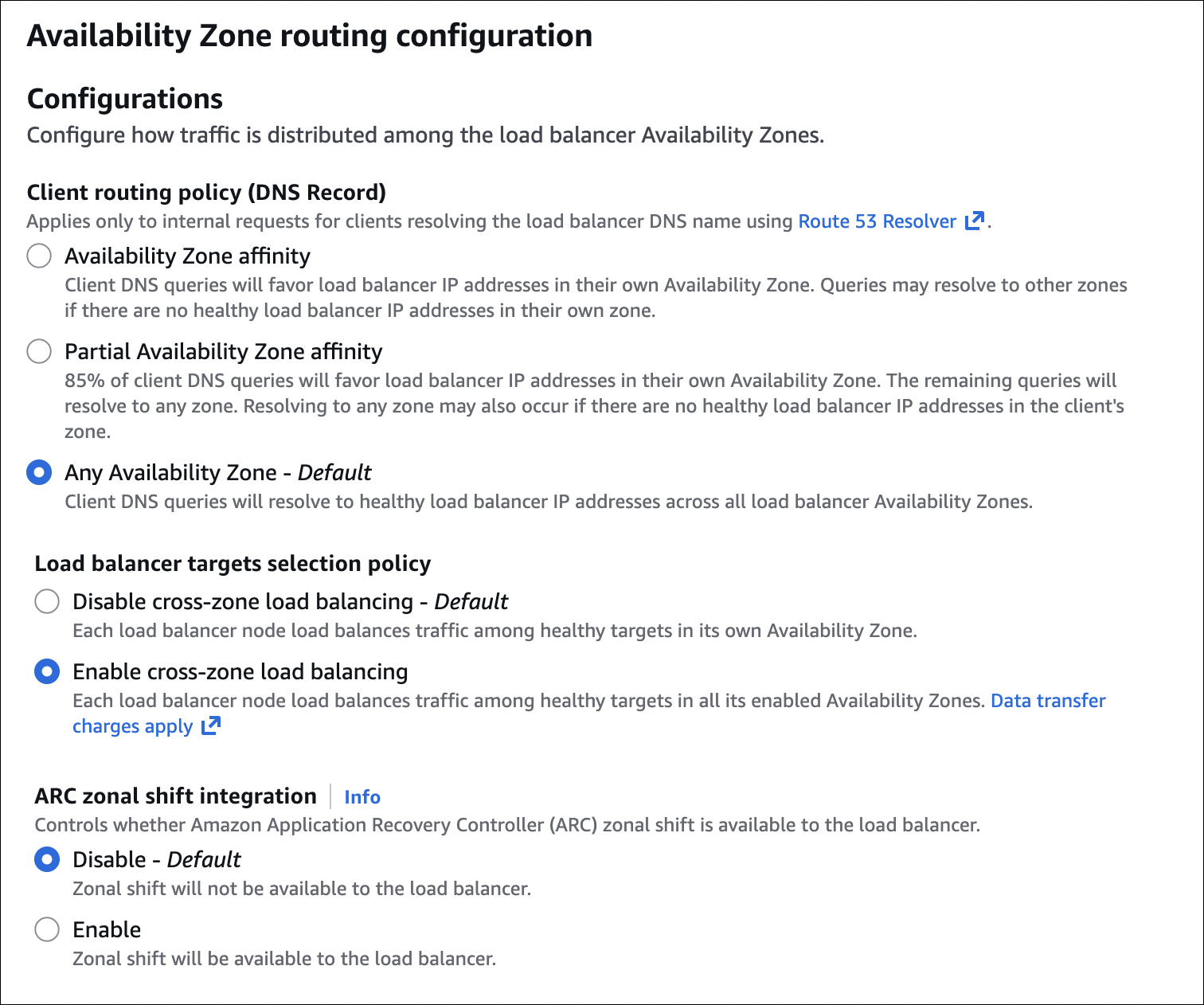

Cross-Zone Load Balancing

This is an important setting that affects how traffic is distributed across Availability Zones. Let me explain with a concrete example.

The scenario:

Let’s say you have a load balancer with instances in 2 Availability Zones:

- AZ-1: 2 EC2 instances

- AZ-2: 8 EC2 instances

- Total: 10 instances

Now, how does the load balancer distribute traffic? It depends on whether cross-zone load balancing is enabled or not.

Cross-Zone Load Balancing: DISABLED (default for NLB)

When cross-zone is disabled, the load balancer distributes traffic evenly across Availability Zones first, then within each AZ.

Here’s how it works:

- Load balancer splits traffic 50/50 between AZ-1 and AZ-2 (because there are 2 AZs)

- AZ-1 gets 50% of traffic → distributes to 2 instances → each instance gets 25% of total traffic

- AZ-2 gets 50% of traffic → distributes to 8 instances → each instance gets 6.25% of total traffic

Result:

- AZ-1 instances: 25% traffic each (2 instances handling 50% total)

- AZ-2 instances: 6.25% traffic each (8 instances handling 50% total)

This is uneven! The 2 instances in AZ-1 are handling way more traffic than the 8 instances in AZ-2. Not ideal.

Cross-Zone Load Balancing: ENABLED (default for ALB)

When cross-zone is enabled, the load balancer distributes traffic evenly across all instances, regardless of which AZ they’re in.

Here’s how it works:

- Load balancer sees 10 total instances

- Distributes traffic evenly: 100% ÷ 10 instances = 10% per instance

- Doesn’t care which AZ the instance is in

Result:

- AZ-1 instances: 10% traffic each (2 instances handling 20% total)

- AZ-2 instances: 10% traffic each (8 instances handling 80% total)

This is even! All instances get the same amount of traffic, regardless of AZ. Much better.
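The arithmetic for both modes fits in one small function. This is a simplified Python sketch that assumes traffic arrives evenly at every AZ and every AZ has at least one instance; `per_instance_share` is a hypothetical helper name.

```python
def per_instance_share(instances_per_az, cross_zone):
    """Return the percentage of total traffic each instance receives, per AZ."""
    total_instances = sum(instances_per_az.values())
    az_count = len(instances_per_az)
    shares = {}
    for az, count in instances_per_az.items():
        if cross_zone:
            # Every instance is an equal target, regardless of AZ
            shares[az] = 100 / total_instances
        else:
            # Traffic is split evenly per AZ first, then within the AZ
            shares[az] = 100 / az_count / count
    return shares


layout = {"AZ-1": 2, "AZ-2": 8}
print(per_instance_share(layout, cross_zone=False))  # {'AZ-1': 25.0, 'AZ-2': 6.25}
print(per_instance_share(layout, cross_zone=True))   # {'AZ-1': 10.0, 'AZ-2': 10.0}
```

The output reproduces the numbers from the example: 25% versus 6.25% per instance with cross-zone disabled, and 10% each with it enabled.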

Why does this matter?

In our example, with cross-zone disabled, the 2 instances in AZ-1 are overloaded while the 8 instances in AZ-2 are underutilized. This is inefficient and can cause performance issues.

With cross-zone enabled, all instances share the load equally. This is more efficient and better utilizes your resources.

The trade-off:

Cross-zone load balancing requires traffic to cross AZ boundaries. This means:

- Slightly higher latency (traffic goes between AZs)

- Possibly higher data transfer costs (with NLB, cross-AZ data transfer is charged; with ALB, AWS does not charge for it)

But for most applications, the benefits (even load distribution, better resource utilization) outweigh these minor costs.

Default behavior:

- ALB: Cross-zone load balancing is enabled by default (always on at the load balancer level; it can be turned off per target group)

- NLB: Cross-zone load balancing is disabled by default (can enable it)

How to enable in NLB:

- Go to your Network Load Balancer

- Go to Description tab

- Click Edit attributes

- Enable Cross-zone load balancing

- Save

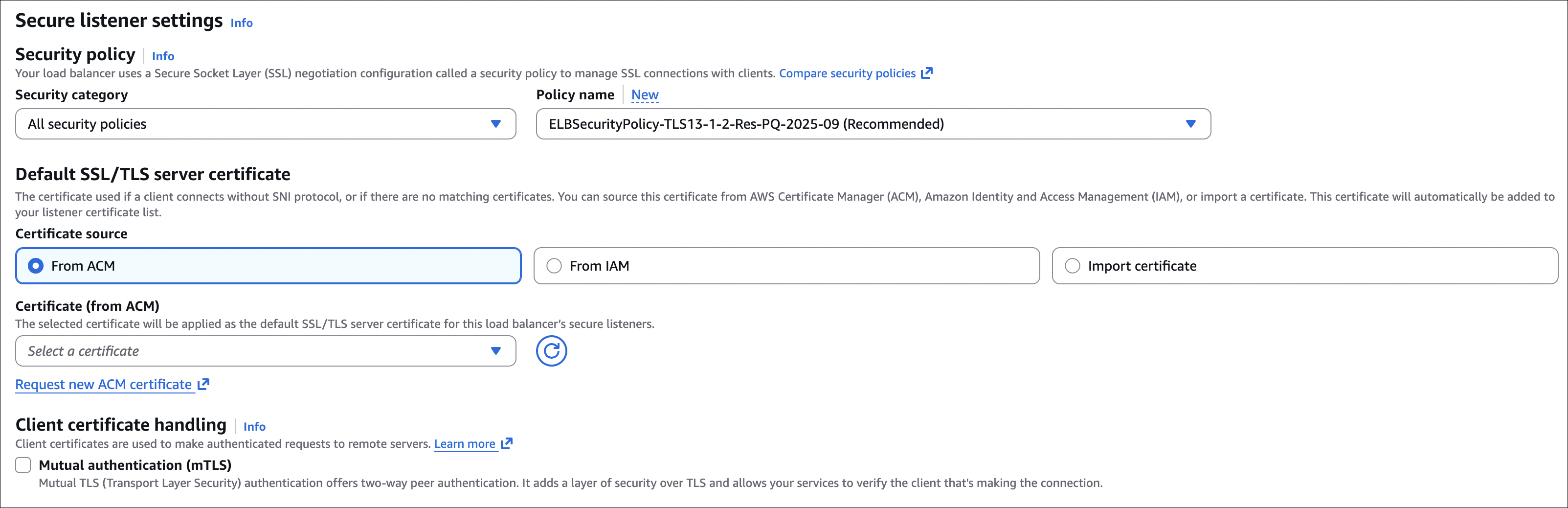

Adding SSL/HTTPS to Existing Load Balancers

You’ve created your load balancer with HTTP (port 80), but now you want to add HTTPS (port 443) for secure connections. Here’s how to do it for both ALB and NLB.

Prerequisites: SSL Certificate

First, you need an SSL certificate. You have two options:

Option 1: AWS Certificate Manager (ACM) - Recommended

- Free SSL certificates

- Automatic renewal

- The certificate must be in the same region as your load balancer

Option 2: Import your own certificate

- Upload your own certificate (from another CA or self-signed)

- You manage renewal

- The certificate can come from anywhere, but you still import it into ACM in the same region as your load balancer

For most cases, ACM is the way to go. It’s free and AWS handles everything.

How to request certificate in ACM:

- Go to Certificate Manager → Request certificate

- Choose Request a public certificate

- Enter your domain name (e.g., `example.com` or `*.example.com` for wildcard)

- Choose validation method (DNS or Email)

- Complete validation (add DNS record or click email link)

- Wait for certificate to be issued (usually a few minutes)
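One thing worth knowing when you pick the domain name: a wildcard stands in for exactly one label. So `*.example.com` covers `www.example.com`, but not the bare `example.com` and not `a.b.example.com`. Here's a simplified sketch of that matching rule. The function `covered_by` is hypothetical, and real TLS validation has more rules than this.

```python
def covered_by(cert_name, hostname):
    """Check whether a certificate name such as *.example.com covers a hostname."""
    cert_labels = cert_name.lower().split(".")
    host_labels = hostname.lower().split(".")
    if len(cert_labels) != len(host_labels):
        return False  # a wildcard stands in for exactly one label
    if cert_labels[0] == "*":
        # Only the leftmost label may be a wildcard; the rest must match exactly.
        return cert_labels[1:] == host_labels[1:]
    return cert_labels == host_labels


print(covered_by("*.example.com", "www.example.com"))  # True
print(covered_by("*.example.com", "example.com"))      # False
```

That's why people often request both `example.com` and `*.example.com` on the same certificate.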

Adding HTTPS Listener to ALB

Let’s add an HTTPS listener to your existing ALB:

- Go to EC2 → Load Balancers → Select your ALB

- Go to Listeners tab

- Click Add listener

- Protocol: HTTPS

- Port: 443

- Default actions:

- Action type: Forward to

- Target group: Select your existing target group (same one used for HTTP)

- Default SSL certificate:

- From ACM: Select your certificate from the dropdown

- Or From IAM: If you imported a certificate to IAM

- Click Add

That’s it! Your ALB now accepts HTTPS traffic on port 443.
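The same listener can be sketched with boto3. This assumes boto3 is installed and credentials are configured. All three ARNs are placeholders, and the helper names are my own.

```python
def https_listener_params(load_balancer_arn, certificate_arn, target_group_arn,
                          protocol="HTTPS"):
    """Build the create_listener arguments for a listener on port 443."""
    return {
        "LoadBalancerArn": load_balancer_arn,
        "Protocol": protocol,  # "HTTPS" for an ALB, "TLS" for an NLB
        "Port": 443,
        "Certificates": [{"CertificateArn": certificate_arn}],
        "DefaultActions": [{"Type": "forward", "TargetGroupArn": target_group_arn}],
    }


def add_listener(load_balancer_arn, certificate_arn, target_group_arn, protocol="HTTPS"):
    """Create the listener (needs AWS credentials; ARNs are placeholders)."""
    import boto3  # imported lazily so the builder above works without the SDK

    client = boto3.client("elbv2")
    return client.create_listener(
        **https_listener_params(load_balancer_arn, certificate_arn,
                                target_group_arn, protocol)
    )
```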

What happens:

- Users can access your application via `https://your-alb-dns-name`

- Traffic is encrypted between client and ALB

- ALB decrypts and forwards plain HTTP to your EC2 instances (unless you configure HTTPS to instances)

- Your EC2 instances don’t need SSL certificates

Redirect HTTP to HTTPS (optional but recommended):

You probably want to redirect all HTTP traffic to HTTPS:

- Go to Listeners tab

- Select your HTTP listener (port 80)

- Click Edit

- Default action: Change from “Forward to” to Redirect to

- Protocol: HTTPS

- Port: 443

- Status code: 301 (Permanent redirect)

- Click Update

Now all HTTP requests automatically redirect to HTTPS.
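To make the redirect concrete, here's a tiny sketch of what the rule does to a request URL: host, path, and query are kept, and only the scheme and port change. This is my own model of the behavior, not AWS code, and the exact `Location` header the ALB sends may be formatted differently.

```python
from urllib.parse import urlsplit, urlunsplit


def redirect_to_https(url, port=443):
    """Return (status_code, location) for an HTTP-to-HTTPS redirect."""
    parts = urlsplit(url)
    # Keep host, path and query; swap the scheme and target port.
    netloc = f"{parts.hostname}:{port}"
    location = urlunsplit(("https", netloc, parts.path, parts.query, ""))
    return 301, location


print(redirect_to_https("http://example.com/cart?item=42"))
# (301, 'https://example.com:443/cart?item=42')
```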

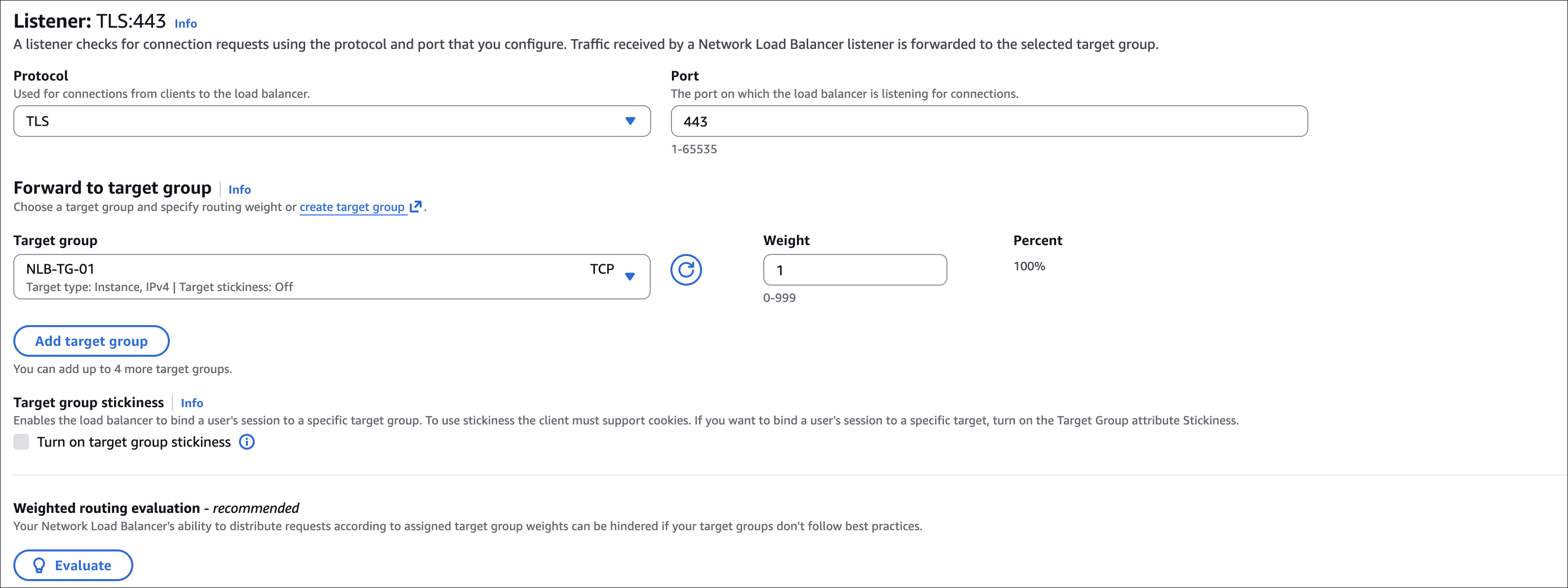

Adding TLS Listener to NLB

For NLB, the process is similar but uses TLS instead of HTTPS:

- Go to EC2 → Load Balancers → Select your NLB

- Go to Listeners tab

- Click Add listener

- Protocol: TLS

- Port: 443

- Default actions:

- Action type: Forward to

- Target group: Select your existing target group

- Default SSL certificate:

- From ACM: Select your certificate

- Or From IAM: If you imported a certificate

- Click Add

Important difference:

NLB uses TLS protocol (not HTTPS). TLS is the underlying protocol that HTTPS uses. For web applications, this works the same way - users access via https://your-nlb-dns-name and traffic is encrypted.

Security Group Updates

Don’t forget to update your security groups:

ALB Security Group:

- Add inbound rule: HTTPS (443) from 0.0.0.0/0 (or your IP)

NLB Security Group:

- Add inbound rule: Custom TCP (443) from 0.0.0.0/0 (or your IP)

EC2 Security Groups:

- No changes needed (they still receive HTTP/TCP from load balancer)

Testing HTTPS

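After adding the listener, test it. The certificate is issued for your domain, so use a hostname it covers (a DNS record pointing at the load balancer). If you call the raw load balancer DNS name, curl reports a certificate name mismatch unless you add -k: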

$ curl https://your-alb-dns-name/

<h1>Hostname: ip-172-31-25-152.eu-central-1.compute.internal</h1>

Or open in browser - you should see the padlock icon indicating secure connection.

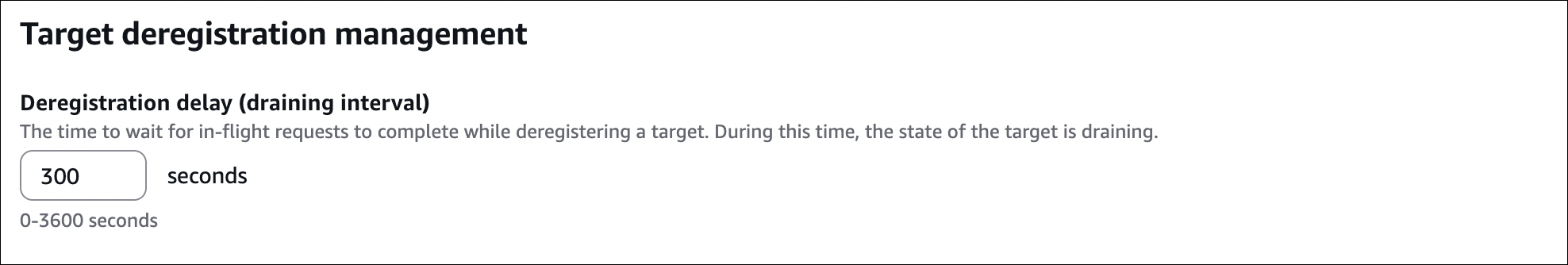

Deregistration Delay (Connection Draining)

When you remove an instance from a target group (or it becomes unhealthy), the load balancer needs to stop sending new requests to it. But what about requests that are already in progress? That’s where deregistration delay comes in.

What is deregistration delay?

Deregistration delay (also called connection draining) is the time the load balancer waits before completely removing an instance from the target group. During this time:

- No new requests are sent to the instance

- Existing requests are allowed to complete

- The target shows as draining in the target group (a deregistered instance is not added back automatically; you have to register it again)

This prevents users from experiencing errors when an instance is being removed or updated.

How it works:

Let’s say you have an instance handling requests. You want to remove it from the target group (maybe for maintenance or scaling down). Here’s what happens:

- You deregister the instance (or it becomes unhealthy)

- Load balancer immediately stops sending new requests to it

- Deregistration delay timer starts (default: 300 seconds / 5 minutes)

- Existing requests continue to be processed

- If all requests complete before the delay expires, instance is removed immediately

- If delay expires and requests are still active, instance is removed anyway (requests may fail)
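The steps above can be sketched as a small simulation. It's a simplified model: each in-flight request is just the number of seconds it still needs, and the function name `drain` is hypothetical.

```python
def drain(in_flight_seconds, delay=300):
    """Simulate deregistration for requests that still need the given seconds."""
    completed = [t for t in in_flight_seconds if t <= delay]
    cut_off = [t for t in in_flight_seconds if t > delay]
    # The target leaves as soon as it is idle, or when the delay expires.
    removed_after = delay if cut_off else max(completed, default=0)
    return {
        "completed": len(completed),
        "cut_off": len(cut_off),
        "removed_after": removed_after,
    }


print(drain([5, 40, 240]))      # {'completed': 3, 'cut_off': 0, 'removed_after': 240}
print(drain([5, 40, 240], 60))  # {'completed': 2, 'cut_off': 1, 'removed_after': 60}
print(drain([5, 40, 240], 0))   # {'completed': 0, 'cut_off': 3, 'removed_after': 0}
```

With the default 300 seconds, even the long 240 second request finishes and the instance is removed as soon as it goes idle.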

Default values:

- ALB: 300 seconds (5 minutes)

- NLB: 300 seconds (5 minutes)

- Range: 0 to 3600 seconds (0 to 60 minutes)

Setting to 0 seconds:

If you set deregistration delay to 0:

- Instance is removed immediately

- Active requests are terminated

- Not recommended for production (can cause errors)

How to configure:

In Target Group:

- Go to EC2 → Target Groups → Select your target group

- Go to Attributes tab

- Click Edit

- Find Deregistration delay

- Set value (0 to 3600 seconds)

- Click Save
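Here's a rough boto3 equivalent of those console steps. It assumes boto3 is installed and credentials are configured. The target group ARN is a placeholder and the helper names are mine. The range check mirrors the 0 to 3600 second limit mentioned above.

```python
def deregistration_delay_attribute(seconds):
    """Build the target group attribute for the deregistration delay."""
    if not 0 <= seconds <= 3600:
        raise ValueError("deregistration delay must be between 0 and 3600 seconds")
    return [{"Key": "deregistration_delay.timeout_seconds", "Value": str(seconds)}]


def set_deregistration_delay(target_group_arn, seconds):
    """Apply the delay to a target group (placeholder ARN supplied by the caller)."""
    import boto3  # imported lazily so the builder above works without the SDK

    client = boto3.client("elbv2")
    return client.modify_target_group_attributes(
        TargetGroupArn=target_group_arn,
        Attributes=deregistration_delay_attribute(seconds),
    )
```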

Real-world example:

Let’s say you’re running a web application that generates PDF reports. A report might take 3-4 minutes to generate. If you remove an instance while a report is being generated:

- Without delay (or delay = 0): Request is interrupted, user gets error, report is lost

- With delay (300 seconds): Request completes, report is generated, user gets their PDF, then instance is removed

Auto Scaling Groups

So far we’ve been manually creating EC2 instances and adding them to load balancers. But what happens when traffic increases? You’d need to manually launch more instances. And when traffic decreases? You’d be paying for instances you don’t need. That’s where Auto Scaling Groups come in.

What is an Auto Scaling Group?

An Auto Scaling Group (ASG) is a collection of EC2 instances that automatically scales based on demand. You define:

- Minimum number of instances (always running)

- Maximum number of instances (never exceed this)

- Desired capacity (target number of instances)

ASG automatically launches or terminates instances to maintain the desired capacity and respond to changes in demand.

Why is it important?

Let me give you a real scenario. You have a web application behind a load balancer. During normal hours, you need 2 instances. But during peak hours (maybe a sale or viral content), traffic spikes to 10x normal. Without Auto Scaling:

- Your 2 instances get overwhelmed

- Users experience slow response times or errors

- You manually launch more instances (takes time)

- By the time instances are ready, peak traffic might be over

With Auto Scaling:

- A CloudWatch alarm fires on high CPU or request count (memory is not a default EC2 metric; it needs the CloudWatch agent)

- Automatically launches more instances (scales out)

- Load balancer distributes traffic to new instances

- When traffic drops, ASG terminates extra instances (scales in)

- You only pay for what you use

How it works with Load Balancers:

This is where it gets powerful. You create an ASG and attach it to a target group. Here’s the flow:

- ASG launches instances based on scaling policies

- Instances automatically register with the target group

- Load balancer starts sending traffic to new instances

- When scaling in, instances are deregistered from target group first (respects deregistration delay)

- Then instances are terminated

It’s a perfect combination - ASG handles instance management, load balancer handles traffic distribution.

Scaling policies:

ASG uses scaling policies to decide when to scale:

Target tracking:

- Maintain a specific metric at a target value (e.g., keep CPU at 50%)

- ASG automatically scales to maintain the target

- Simplest to configure

Step scaling:

- Define steps with different scaling actions

- If CPU > 50%, add 2 instances

- If CPU > 80%, add 5 instances

- More granular control
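The two steps above can be sketched like this. It's a toy model that only handles scaling out. The `STEPS` table and the function name are made up for illustration, and real step scaling defines its steps relative to a CloudWatch alarm threshold.

```python
# (CPU threshold in percent, instances to add), checked from highest to lowest
STEPS = [(80, 5), (50, 2)]


def step_scale_out(current, cpu, maximum, steps=STEPS):
    """Return the new instance count after applying the first matching step."""
    for threshold, add in steps:
        if cpu > threshold:
            # Never go past the group's maximum size.
            return min(current + add, maximum)
    return current  # no step matched, nothing to do


print(step_scale_out(2, 65, 10))  # 4
print(step_scale_out(2, 90, 10))  # 7
print(step_scale_out(8, 90, 10))  # 10
print(step_scale_out(2, 40, 10))  # 2
```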

Simple scaling:

- Scale based on a single alarm

- If alarm triggers, scale out or in

- Wait for cooldown period before next action

Scheduled scaling:

- Scale at specific times (e.g., add instances before business hours)

- Useful for predictable traffic patterns

Predictive scaling:

- Uses machine learning to predict future demand based on historical patterns

- Scales proactively before traffic spikes (instead of reacting)

- Analyzes up to 14 days of historical metrics to identify patterns (needs at least 24 hours of data to start forecasting)

- Works best with consistent, predictable traffic patterns

- Can combine with target tracking (predictive for patterns, reactive for surprises)

Example scenario:

Let’s say you run an e-commerce site:

- Minimum: 2 instances (always running for baseline traffic)

- Desired: 2 instances (normal operation)

- Maximum: 10 instances (peak capacity)

Scaling policy: Target tracking - keep average CPU at 50%

What happens:

- Normal traffic: 2 instances running, CPU around 30-40%

- Traffic increases: CPU goes to 60%, ASG launches 2 more instances (now 4 total)

- Traffic spikes: CPU still high, ASG launches 2 more (now 6 total)

- Traffic decreases: CPU drops to 30%, ASG terminates 2 instances (back to 4)

- Back to normal: CPU stays low, ASG terminates 2 more (back to 2)

All automatic. You don’t do anything.

Health checks:

ASG performs health checks on instances:

- If an instance fails health check, ASG terminates it

- Launches a replacement instance automatically

- Keeps your desired capacity maintained

Launch template or configuration:

When ASG launches instances, it needs to know what to launch. You provide:

- Launch template: AMI, instance type, security groups, user data, etc.

- ASG uses this template to launch identical instances

Cooldown periods:

After a simple scaling action, ASG waits for a cooldown period before taking another one. This prevents rapid scaling out and in (thrashing). Target tracking and step scaling rely on an instance warmup time instead of the cooldown.
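Here's a minimal sketch of how a cooldown filters scaling actions. Alarm times are in seconds, and I'm assuming a 300 second cooldown. It's simplified: the real cooldown starts when the previous scaling activity completes, not when the alarm fired.

```python
def actions_taken(alarm_times, cooldown=300):
    """Return the alarm times that actually lead to a scaling action."""
    taken = []
    for t in sorted(alarm_times):
        # Act only if no earlier action is still cooling down.
        if not taken or t - taken[-1] >= cooldown:
            taken.append(t)
    return taken


print(actions_taken([0, 60, 120, 310, 400, 700]))  # [0, 310, 700]
```

Six alarms, but only three scaling actions. The ones at 60, 120, and 400 seconds land inside a cooldown window and are ignored.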

Best practices:

- Set appropriate min/max values (don’t set max too high, costs can spiral)

- Use target tracking for most cases (simplest and most effective)

- Enable health checks (replace unhealthy instances)

- Use launch templates (easier to manage and update)

- Monitor scaling activities in CloudWatch

- Test your scaling policies (make sure they work as expected)

The combination:

Load balancer + Auto Scaling Group is a powerful combination:

- Load balancer distributes traffic evenly

- Auto Scaling ensures you have enough capacity

- Together, they provide scalable, highly available applications

Without Auto Scaling, you’re manually managing instances. With it, your infrastructure adapts to demand automatically. That’s why it’s so important - it makes your applications truly scalable and cost-effective.

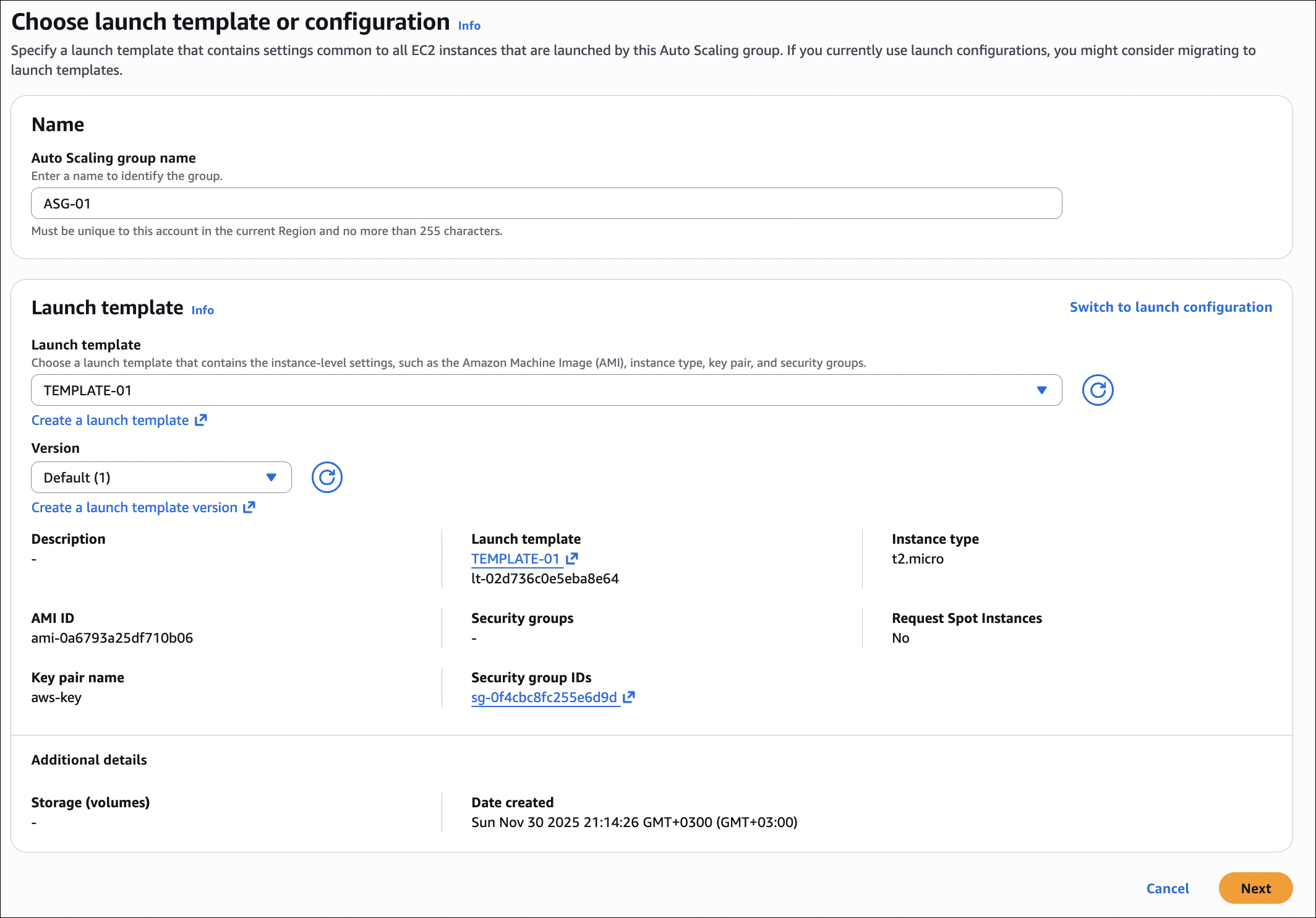

Hands-On: Creating Auto Scaling Group with Existing Load Balancer

Let’s create an Auto Scaling Group that automatically registers instances with our existing load balancer. We’ll use the same nginx user data script we used before.

Step 1: Create Launch Template

First, we need a launch template that defines what instances ASG should launch:

- Go to EC2 → Launch Templates → Create launch template

- Name: `web-server-template`

- Description: Template for web servers with nginx

- AMI: Amazon Linux 2023

- Instance type: t2.micro

- Key pair: Select your key pair

- Network settings:

- VPC: Select your VPC

- Subnet: Don’t select (ASG will choose)

- Security group: Select the security group that allows HTTP from load balancer

- Advanced details → User data: Paste the nginx script:

#!/bin/bash

set -ex

dnf update -y

dnf install -y nginx

echo "<h1>Hostname: $(hostname)</h1>" > /usr/share/nginx/html/index.html

systemctl enable nginx

systemctl start nginx

- Click Create launch template
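The same template can be sketched with boto3. This assumes boto3 is installed and credentials are configured. The AMI ID, security group ID, and key pair name are placeholders you'd supply, and the helper names are mine. One difference from the console: the API expects the user data as base64 text.

```python
import base64

USER_DATA = """#!/bin/bash
set -ex
dnf update -y
dnf install -y nginx
echo "<h1>Hostname: $(hostname)</h1>" > /usr/share/nginx/html/index.html
systemctl enable nginx
systemctl start nginx
"""


def launch_template_data(ami_id, security_group_id, key_name):
    """Build the LaunchTemplateData for the nginx web server template."""
    return {
        "ImageId": ami_id,
        "InstanceType": "t2.micro",
        "KeyName": key_name,
        "SecurityGroupIds": [security_group_id],
        # The API wants base64 text; the console encodes it for you.
        "UserData": base64.b64encode(USER_DATA.encode()).decode(),
    }


def create_template(ami_id, security_group_id, key_name):
    """Create the launch template (needs AWS credentials; IDs are placeholders)."""
    import boto3  # imported lazily so the builder above works without the SDK

    ec2 = boto3.client("ec2")
    return ec2.create_launch_template(
        LaunchTemplateName="web-server-template",
        VersionDescription="Template for web servers with nginx",
        LaunchTemplateData=launch_template_data(ami_id, security_group_id, key_name),
    )
```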

Step 2: Create Auto Scaling Group

Now let’s create the ASG:

- Go to EC2 → Auto Scaling Groups → Create Auto Scaling group

- Name: `web-servers-asg`

- Launch template: Select `web-server-template`

- Click Next

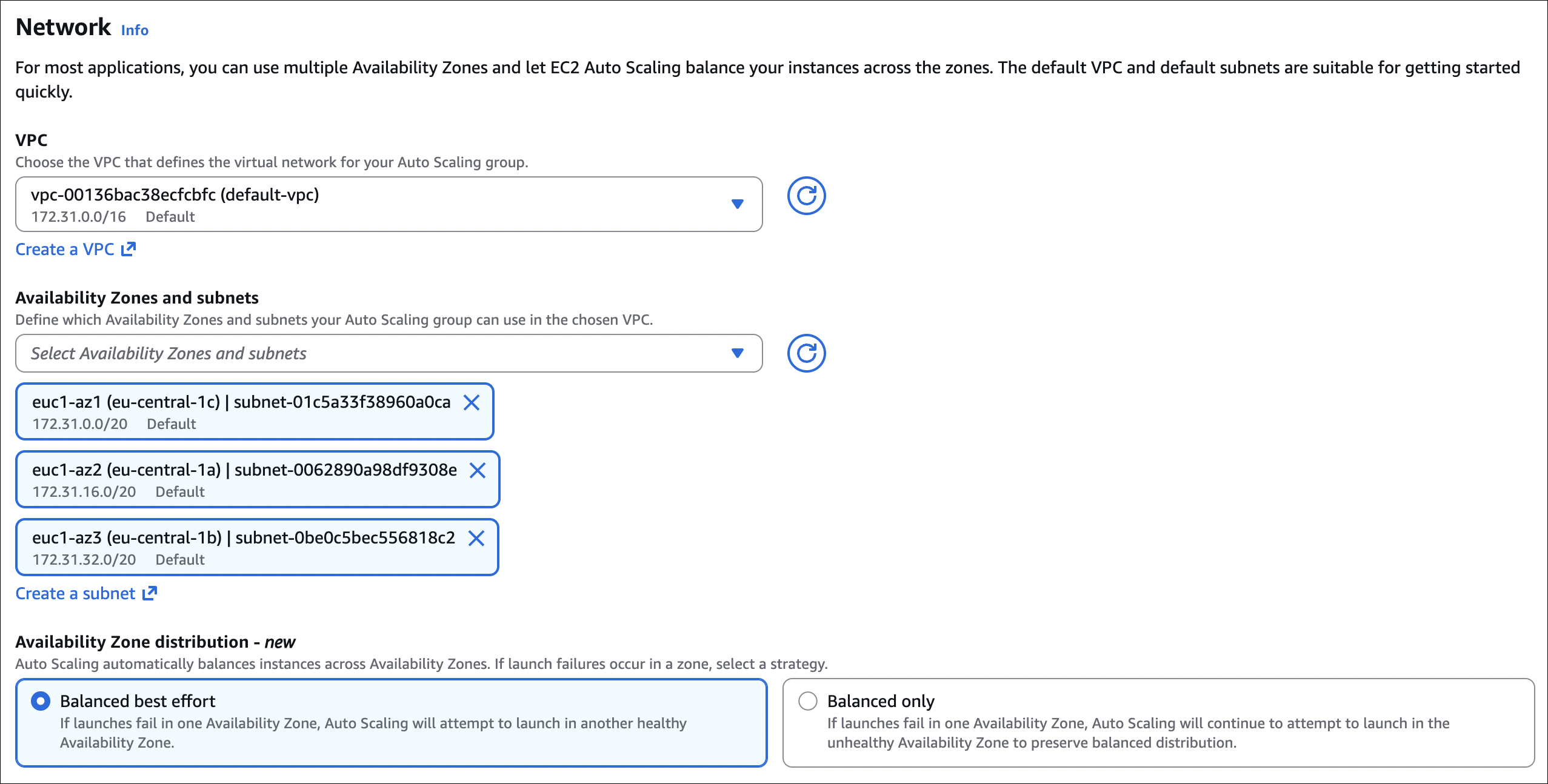

Configure settings:

- VPC: Select your VPC

- Availability Zones and subnets: Select at least 2 subnets in different AZs

- Click Next

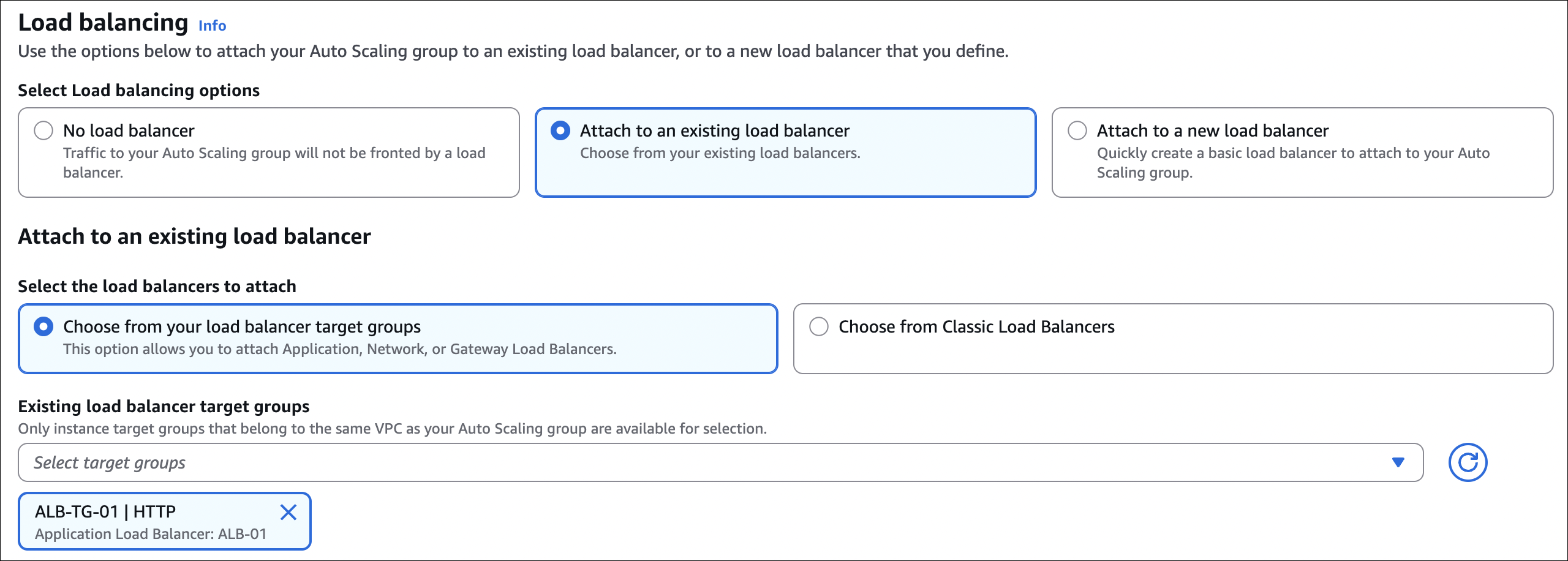

Integrate with other services:

- Load balancing: Select Attach to an existing load balancer

- Choose a target group: Select your existing target group (e.g.,

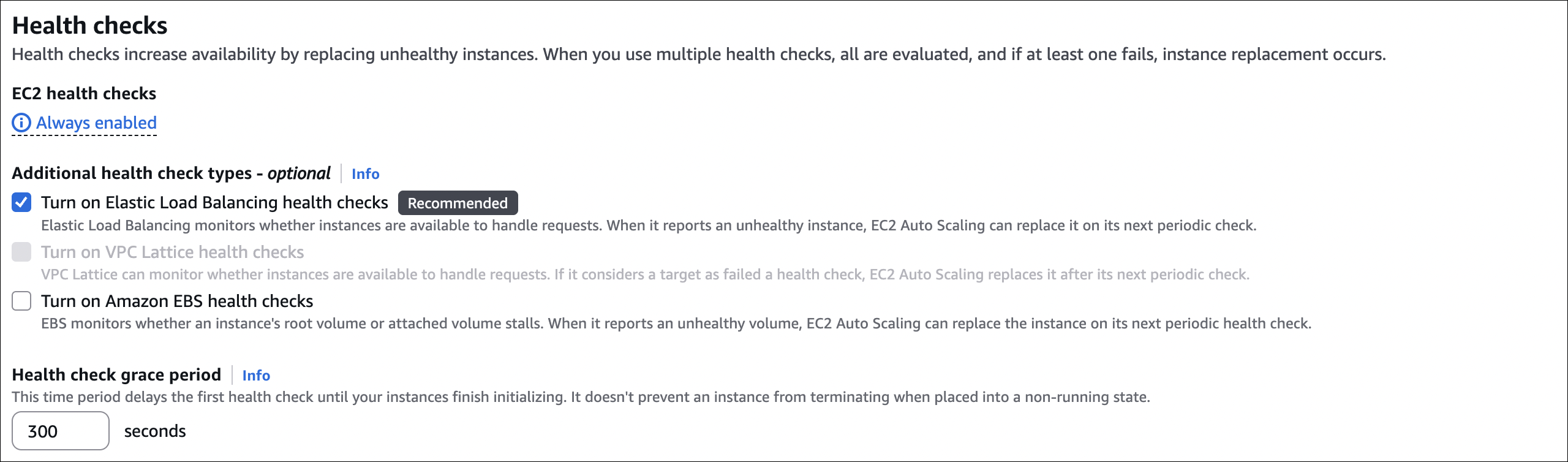

web-servers-tg) - Health check type: Select ELB (this enables load balancer health checks)

- Health check grace period: 300 seconds (time for instance to become healthy)

- Click Next

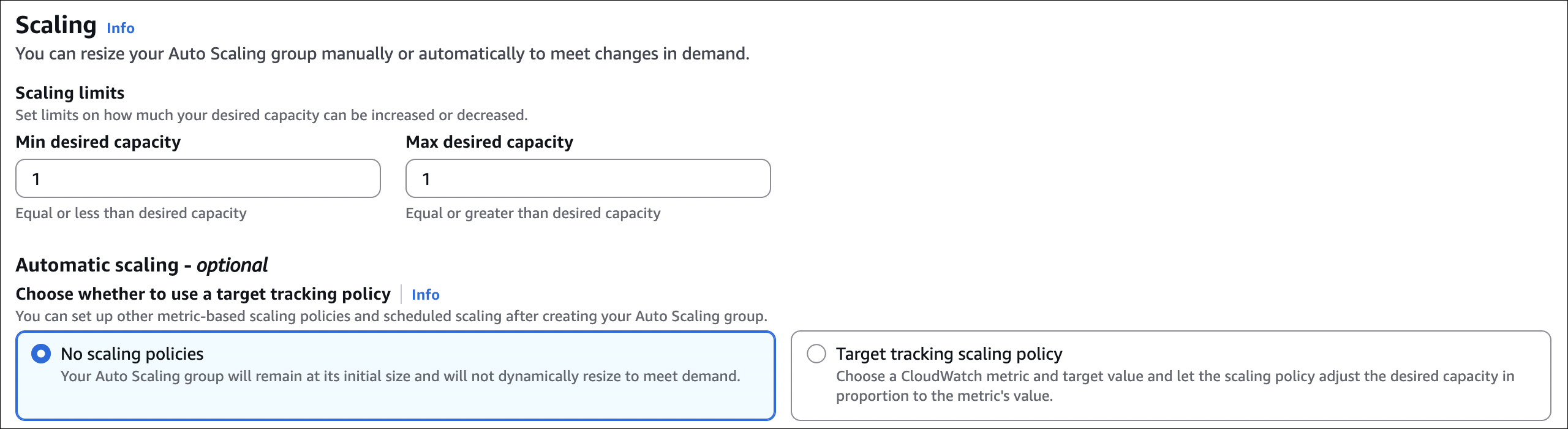

Configure group size and scaling policies:

- Desired capacity: 1

- Minimum capacity: 1

- Maximum capacity: 1

- Scaling policies: None (we’ll test manual scaling first)

- Click Next

Add notifications (optional): Skip for now

- Click Next

Add tags (optional): Add tags if needed

- Click Next

Review and create:

- Review settings

- Click Create Auto Scaling group

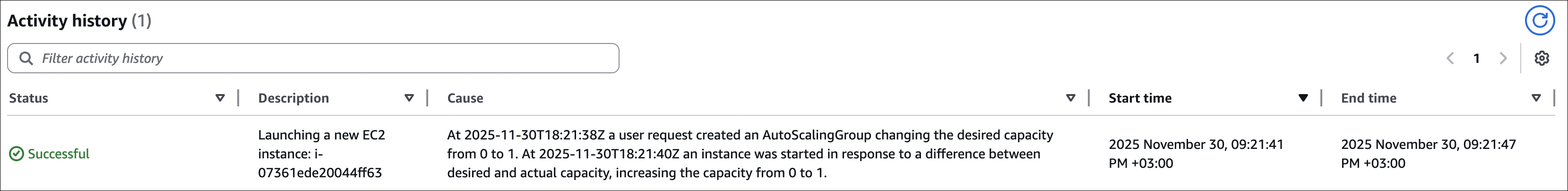
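For reference, here's roughly what the wizard above does, sketched with boto3. It assumes boto3 is installed and credentials are configured. The subnet IDs and target group ARN are placeholders, and the helper names are mine. The checks mirror the wizard: at least 2 subnets, and minimum <= desired <= maximum.

```python
def asg_params(subnet_ids, target_group_arn, minimum=1, desired=1, maximum=1):
    """Build the create_auto_scaling_group arguments used in this walkthrough."""
    if not minimum <= desired <= maximum:
        raise ValueError("need minimum <= desired <= maximum")
    if len(subnet_ids) < 2:
        raise ValueError("pick at least 2 subnets in different AZs")
    return {
        "AutoScalingGroupName": "web-servers-asg",
        "LaunchTemplate": {
            "LaunchTemplateName": "web-server-template",
            "Version": "$Latest",
        },
        "MinSize": minimum,
        "DesiredCapacity": desired,
        "MaxSize": maximum,
        "VPCZoneIdentifier": ",".join(subnet_ids),  # comma-separated subnet IDs
        "TargetGroupARNs": [target_group_arn],
        "HealthCheckType": "ELB",
        "HealthCheckGracePeriod": 300,
    }


def create_asg(subnet_ids, target_group_arn):
    """Create the group (needs AWS credentials; IDs and ARN are placeholders)."""
    import boto3  # imported lazily so the builder above works without the SDK

    client = boto3.client("autoscaling")
    return client.create_auto_scaling_group(**asg_params(subnet_ids, target_group_arn))
```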

Step 3: Verify Instance Creation

Wait a minute or two for ASG to launch the instance:

- Go to EC2 → Auto Scaling Groups → Select `web-servers-asg`

- Go to Activity tab

- You should see “Launching a new EC2 instance” activity

- Wait for status to change to “Successful”

Check target group:

- Go to EC2 → Target Groups → Select your target group

- Go to Targets tab

- You should see the instance automatically registered

- Wait for it to become “healthy” (may take a minute)

The instance was automatically created by ASG and registered with the target group. No manual steps needed!

Step 4: Test the Setup

Test that everything works:

$ curl http://your-alb-dns-name/

<h1>Hostname: ip-xxx-xxx-xxx-xxx</h1>

The instance created by ASG is now serving traffic through the load balancer.

Step 5: Scale Up Manually

Now let’s scale up to see ASG in action:

- Go to EC2 → Auto Scaling Groups → Select `web-servers-asg`

- Go to Details tab

- Click Edit

- Change Desired capacity: 2

- Change Maximum capacity: 2

- Click Update
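Why change the maximum too? Desired capacity can't exceed the maximum, so going from 1 to 2 instances means raising both. Here's a small sketch of that logic with a boto3 call at the end. It assumes boto3 is installed and credentials are configured, and the helper names are mine.

```python
def scale_to(desired, minimum, maximum):
    """Return the update needed to reach `desired`, raising the maximum if required."""
    if desired < minimum:
        raise ValueError("desired capacity cannot go below the minimum")
    update = {"AutoScalingGroupName": "web-servers-asg", "DesiredCapacity": desired}
    if desired > maximum:
        # Desired capacity must fit under the maximum, so lift it as well.
        update["MaxSize"] = desired
    return update


def apply_scale(desired, minimum, maximum):
    """Send the update to AWS (needs credentials)."""
    import boto3  # imported lazily so the helper above works without the SDK

    client = boto3.client("autoscaling")
    return client.update_auto_scaling_group(**scale_to(desired, minimum, maximum))


print(scale_to(2, minimum=1, maximum=1))
# {'AutoScalingGroupName': 'web-servers-asg', 'DesiredCapacity': 2, 'MaxSize': 2}
```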

Watch the activity:

- Go to Activity tab

- You should see a new activity: “Launching a new EC2 instance”

- Wait for it to complete (status: “Successful”)

Check target group again:

- Go to EC2 → Target Groups → Select your target group

- Go to Targets tab

- You should now see 2 instances

- Both should become “healthy” after a minute

Test load balancing:

$ curl http://your-alb-dns-name/

<h1>Hostname: ip-xxx-xxx-xxx-xxx</h1>

$ curl http://your-alb-dns-name/

<h1>Hostname: ip-yyy-yyy-yyy-yyy</h1>

You should see different hostnames, showing that both instances are receiving traffic.
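If you don't want to eyeball curl output, here's a small sketch that counts which backends answered. It assumes the page format produced by the nginx user data script. The sample hostnames are made up, and `fetch_bodies` needs a reachable load balancer URL.

```python
import re
from collections import Counter

HOSTNAME = re.compile(r"<h1>Hostname: ([^<]+)</h1>")


def count_backends(bodies):
    """Count how many responses came from each backend hostname."""
    hits = Counter()
    for body in bodies:
        match = HOSTNAME.search(body)
        if match:
            hits[match.group(1)] += 1
    return hits


def fetch_bodies(url, attempts=10):
    """Request the URL several times and collect the response bodies."""
    from urllib.request import urlopen

    bodies = []
    for _ in range(attempts):
        with urlopen(url, timeout=5) as resp:
            bodies.append(resp.read().decode())
    return bodies


# Made-up responses standing in for fetch_bodies("http://your-alb-dns-name/")
sample = [
    "<h1>Hostname: ip-a</h1>",
    "<h1>Hostname: ip-b</h1>",
    "<h1>Hostname: ip-a</h1>",
]
print(count_backends(sample))  # Counter({'ip-a': 2, 'ip-b': 1})
```

Two distinct keys in the counter means both instances are receiving traffic.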